Generally, when I'm analyzing MySQL performance on Linux with "localhost" test workloads, I configure client connections to use the IP port (loopback) to connect to MySQL Server, and not the UNIX socket. This still keeps the IP stack in the game, so if something odd is going on with IP, we become aware of it early. And indeed, this has already helped several times to discover such problems even without any network link between client and server (like this one, etc.). However, in the past we also observed a pretty significant difference in QPS results when the IP port was used compared to the UNIX socket (communications via the UNIX socket were near 15% faster).. Over time, with newer OL kernel releases, this gap became smaller and smaller. But in all such cases it's always hard to say whether the gap was reduced due to OS kernel / IP stack improvements, or just because MySQL Server is hitting new scalability bottlenecks ;-))
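To get a feel for the transport-level part of this gap without MySQL in the picture, here is a minimal Python sketch (an illustration only, not how the tests in this post were run) that times a strict 1-byte request/response ping-pong over TCP loopback and over a UNIX socket. All names here (`ping_pong_us`, `compare`, the socket file name) are hypothetical. For context, with the MySQL client on Unix the host `localhost` normally goes through the socket file, while `-h 127.0.0.1` (or `--protocol=TCP`) forces a TCP connection over loopback.

```python
import os
import socket
import tempfile
import threading
import time


def _echo_server(srv: socket.socket, rounds: int) -> None:
    """Accept a single client and echo back each small message (the 'server' side)."""
    conn, _ = srv.accept()
    with conn:
        if conn.family == socket.AF_INET:
            conn.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
        for _ in range(rounds):
            data = conn.recv(64)
            if not data:  # client went away early
                break
            conn.sendall(data)


def ping_pong_us(family: int, address, rounds: int = 10_000) -> float:
    """Average request/response round-trip time in microseconds over `family`."""
    srv = socket.socket(family, socket.SOCK_STREAM)
    srv.bind(address)
    srv.listen(1)
    server = threading.Thread(target=_echo_server, args=(srv, rounds))
    server.start()

    cli = socket.socket(family, socket.SOCK_STREAM)
    if family == socket.AF_INET:
        # Disable Nagle so each 1-byte "query" is sent immediately.
        cli.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    cli.connect(srv.getsockname())  # for TCP, resolves port 0 to the real port
    start = time.perf_counter()
    for _ in range(rounds):
        cli.sendall(b"q")  # tiny request ...
        cli.recv(64)       # ... then wait for the tiny reply before the next one
    elapsed = time.perf_counter() - start

    cli.close()
    server.join()
    srv.close()
    return elapsed / rounds * 1e6


def compare(rounds: int = 10_000) -> tuple[float, float]:
    """Return (tcp_loopback_us, unix_socket_us) average round-trip times."""
    tcp_us = ping_pong_us(socket.AF_INET, ("127.0.0.1", 0), rounds)
    with tempfile.TemporaryDirectory() as d:
        unix_us = ping_pong_us(socket.AF_UNIX, os.path.join(d, "pingpong.sock"), rounds)
    return tcp_us, unix_us


if __name__ == "__main__":
    tcp_us, unix_us = compare()
    print(f"TCP loopback : {tcp_us:.1f} us per round-trip")
    print(f"UNIX socket  : {unix_us:.1f} us per round-trip")
```

Absolute numbers depend on the kernel, the CPU and Python's own threading overhead, so only the relative gap between the two lines is of interest, and even that is a rough hint rather than a prediction of MySQL QPS.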

Anyway, I've continued to use the IP port in all my tests until now. But recently the same discussion about the impact of IP port -vs- UNIX socket came up again in other investigations, and I'd like to share the results I've obtained on different HW servers with the MySQL 8.0 GA release.

The test workload is the most "aggressive" one for client/server ping-pong exchange: Sysbench point-selects (the same test scenario that initially reported 2.1M QPS on MySQL 8.0), but this time on 3 different servers :

- 4CPU Sockets (4S) 96cores-HT Broadwell

- 2CPU Sockets (2S) 48cores-HT Skylake

- 2CPU Sockets (2S) 44cores-HT Broadwell

and to see how different the results are when exactly the same test is run via IP port or via UNIX socket.

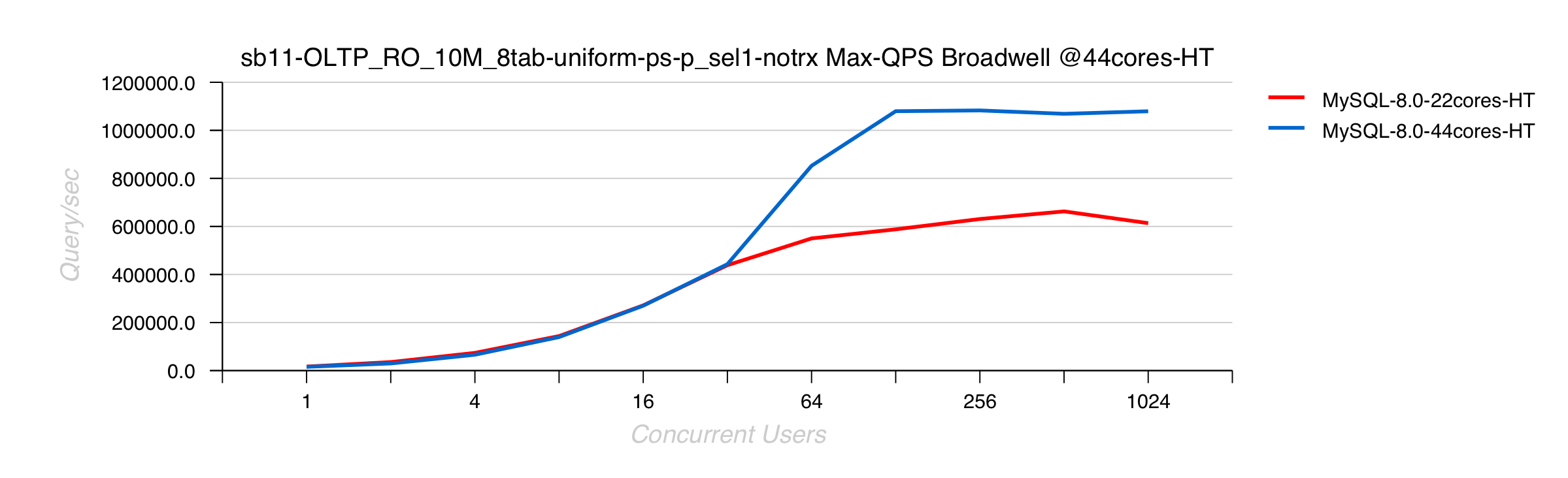

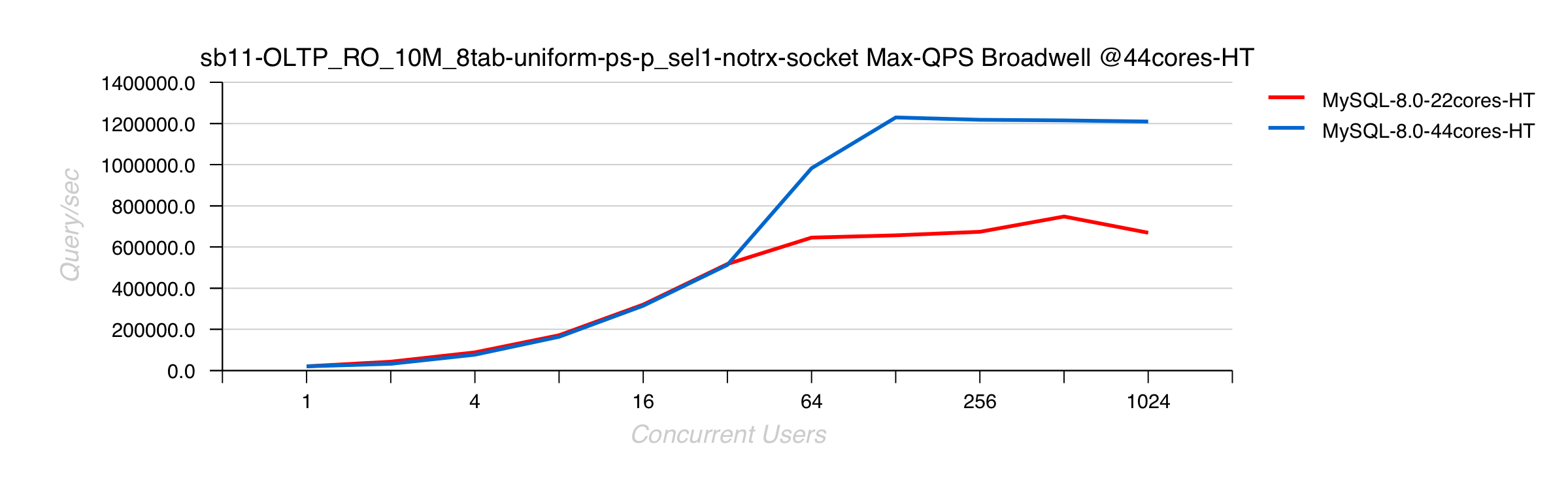

Broadwell 2S 44cores-HT

- IP port :

- UNIX socket :

Comments :

- wow, up to 18% difference !

- and you can clearly see MySQL 8.0 surpassing 1.2M QPS with UNIX socket, while staying under 1.1M QPS with IP port..

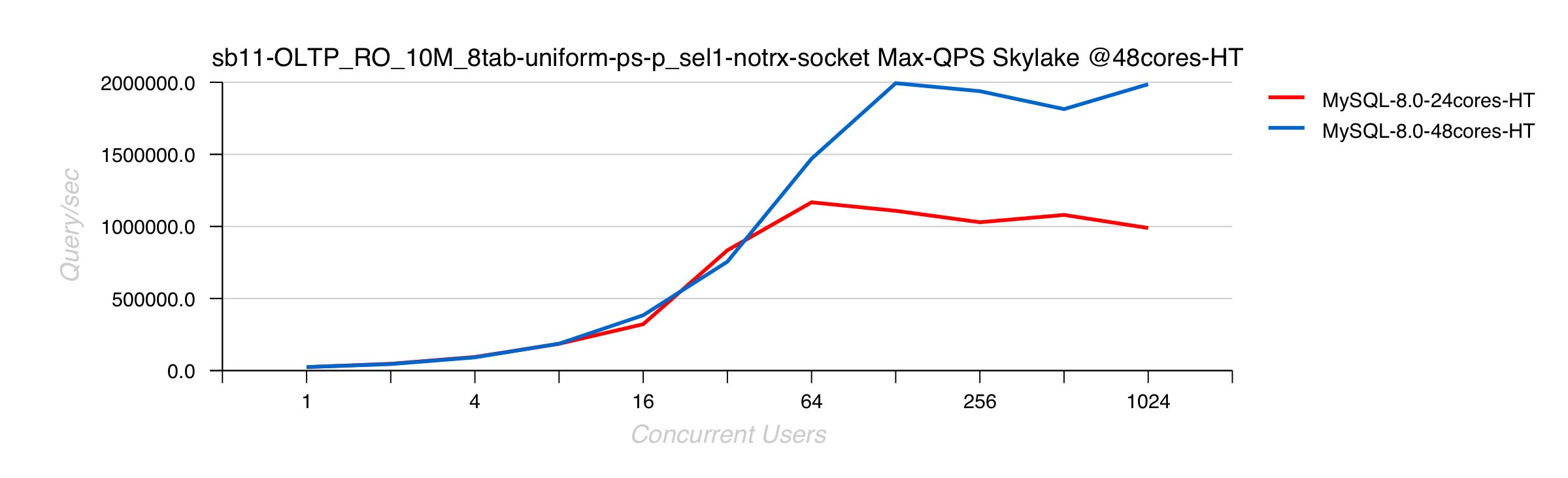

Skylake 2S 48cores-HT

- IP port :

- UNIX socket :

Comments :

- interesting.. -- we're now reaching 2M QPS using UNIX socket !

- and over 1.8M QPS with IP port (as observed before in the same test), 1.88M to be exact..

- which gives only a 6% difference between these Max QPS results

- while at lower load levels the difference goes up to 19% (!!)

- so, there is definitely something to re-investigate and consider..

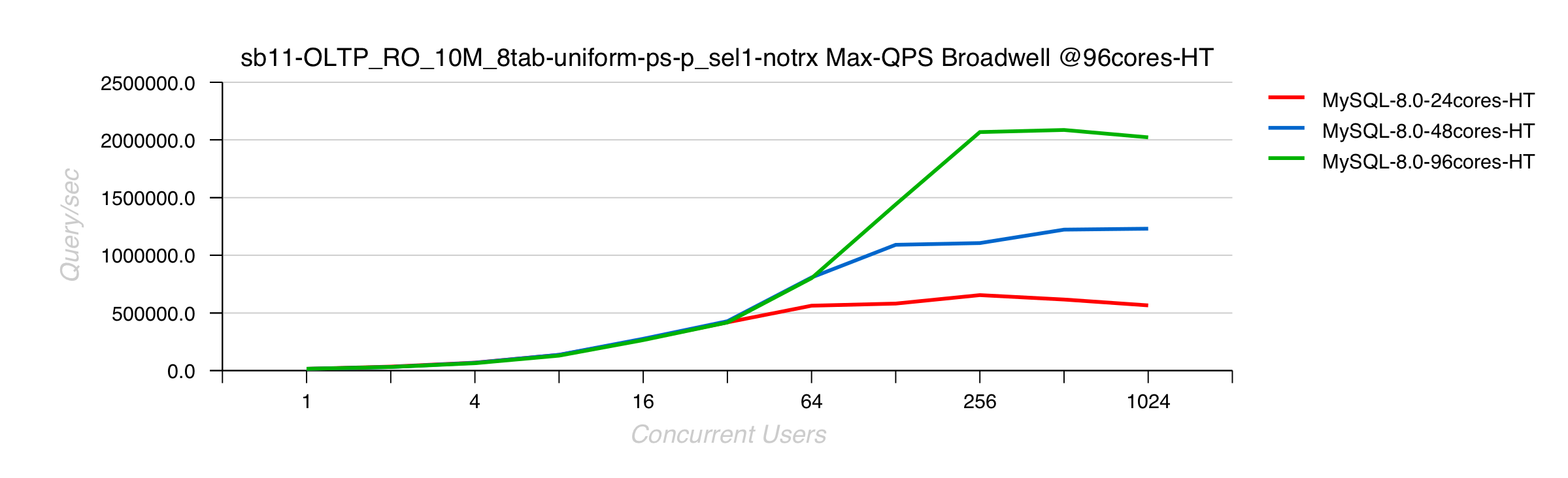

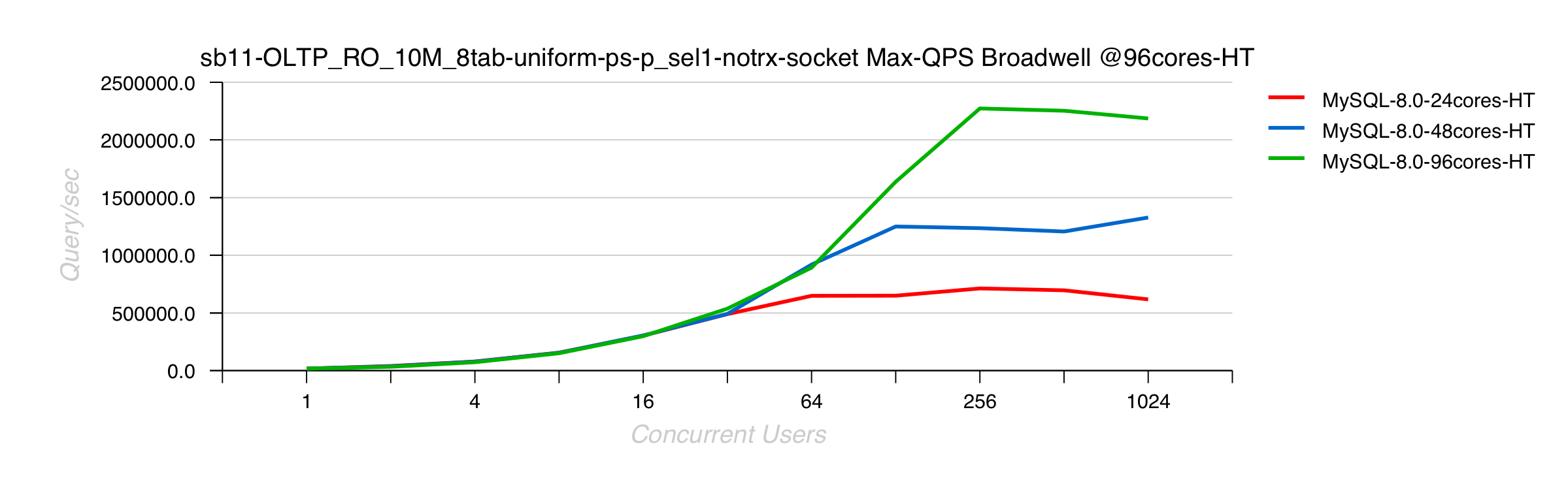

Broadwell 4S 96cores-HT

- IP port :

- UNIX socket :

Comments :

- again, 2.25M QPS reached here with UNIX socket ! ;-)

- so, only a 7% difference -vs- the 2.1M QPS obtained with IP port

- however, a 29% (!!) difference (417K -vs- 536K) at the 32 users load level..

- indeed, there is definitely something here ;-))
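The percentages above are relative gains of UNIX socket over the IP port baseline. A quick check using only the QPS figures quoted in this post (the helper name `pct_gain` is just for illustration):

```python
def pct_gain(ip_qps: float, unix_qps: float) -> float:
    """Relative QPS gain of UNIX socket over IP port, in percent (IP port is the baseline)."""
    return (unix_qps - ip_qps) / ip_qps * 100.0


# (IP port QPS, UNIX socket QPS) pairs taken from the results above
results = {
    "Skylake 2S, peak": (1.88e6, 2.0e6),
    "Broadwell 4S, peak": (2.1e6, 2.25e6),
    "Broadwell 4S, 32 users": (417e3, 536e3),
}

for label, (ip, unix) in results.items():
    print(f"{label}: {pct_gain(ip, unix):.1f}%")
# Skylake 2S, peak: 6.4%
# Broadwell 4S, peak: 7.1%
# Broadwell 4S, 32 users: 28.5%
```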

But why don't we see such a big difference under high load ?.. -- one of the explanations is the higher overhead we now have, at least from the open_table() code, which is involved in every query; at a 2M QPS rate it becomes pretty visible.

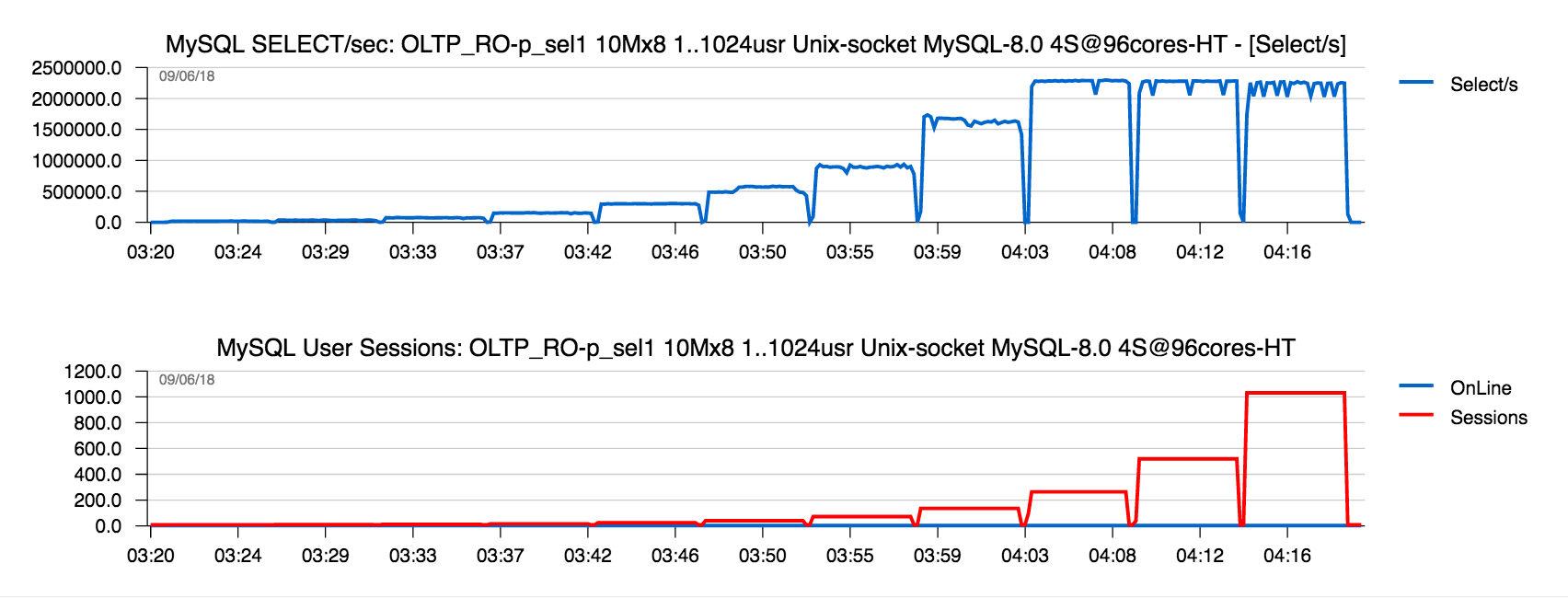

The following graph represents the Sysbench point-select workload over time -- the load starts with 1 user, then 2 users, 4, .. and up to 1024 :

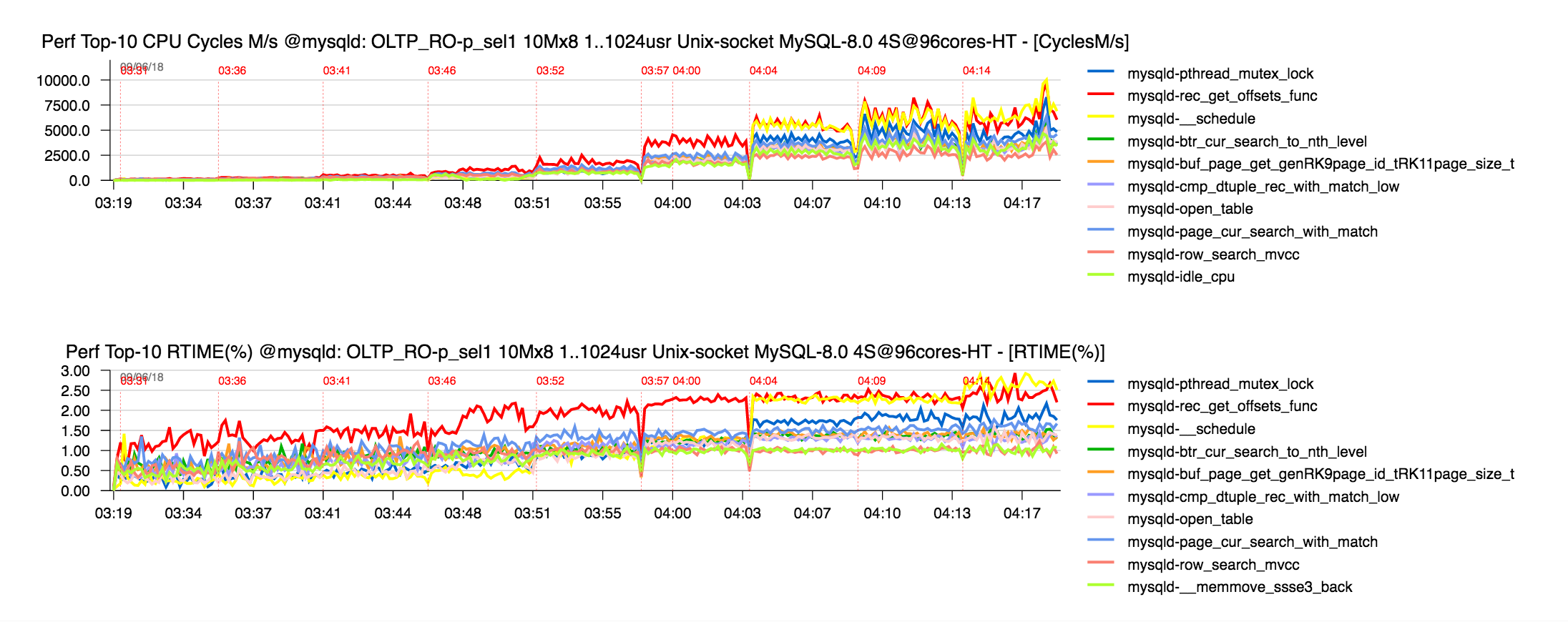

and the next graph traces the hottest functions reported by the "perf" profiler once the load level becomes high enough (4 - 1024 users) :

as you can see, open_table() goes up with growing load, and the growing pthread_mutex_lock() time (called from open_table()) points to a real bottleneck here..
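A back-of-the-envelope way to see why such a lock hurts more as QPS grows: assuming (as a simplification) that every query has to hold the same mutex for a time $t_{cs}$, that mutex can be busy for at most 1 second per second, so the throughput is bounded by:

$$
\text{QPS}_{\max} \le \frac{1}{t_{cs}}, \qquad \text{QPS} = 2\times10^{6} \;\Rightarrow\; t_{cs} < \frac{1}{2\times10^{6}}\,\text{s} = 0.5\,\mu\text{s}
$$

At a 2M QPS rate this leaves less than half a microsecond of lock hold time per query, so even a small amount of extra work inside such a critical section quickly becomes visible.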

At the same time, it's clear that some other parts of the code also need more love.. -- if only we had all the time we need to fix everything we want ;-))

INSTEAD OF SUMMARY

- indeed, there may be a huge difference depending on whether you use IP port or UNIX socket for your local connections.. -- and this is also definitely something for our OL Team to investigate ;-))

- our best result on 2S HW is now moving up to 2M QPS ;-))

- our biggest ever result is 2.25M QPS, but I'm pretty sure that on a 4S Skylake it'll be even bigger than on the current 4S Broadwell server ;-))

- but this is not in the scope of our priorities, as there are many other, more critical issues to fix + new features to deliver..

Thank you for using MySQL ! -- stay tuned ;-))

Rgds,

-Dimitri