Initially, high network traffic was observed due to CURSOR usage in the db_STRESS code. Of course, fetching row by row is not the best way to get SELECT results as fast as possible :) Especially if we want to compare apples to apples: CURSOR was not used in the MySQL test implementation. So I just modified my code to work without CURSOR, and network traffic became reasonable...

The sync method choice is quite interesting in PostgreSQL. After trying all of them I stuck with 'open_datasync' as the best-performing one...

The high I/O activity really impressed me - at least I was ready to believe all transactions are *really* committed, seeing that the number of disk write I/O operations was equal to or higher than the number of WRITE transactions reported by db_STRESS! To get even higher throughput from my storage I disabled cache mirroring between the 2 controllers (as we use only one fiber channel it changes nothing for data safety, but gives a significant speed-up due to lower I/O latency).

The appearance of deadlocks really killed me... I was far from imagining that 64 concurrent sessions randomly selecting an object ID would still have a big chance of accessing the *same* ID at the *same* time!... Well, as my goal was not to make things even worse with artificial locking, I just adapted the db_STRESS Injector to increase ID dispersion and ensure each session stays within its own set of IDs. Before, it was only 'ID = random() % MAX_OBJECT_ID + 1'; I added the following: 'ID = ID - ID % Numb_of_sessions + Session_ID'. However, a few questions are still open:

- why do I have deadlocks here? Every SQL statement is auto-committed, so it cannot lock anything other than the row currently being updated/deleted/inserted. I've tried to reproduce it manually with several 'psql' connections - no luck, everything works just fine: the session locking a row just blocks the others and no deadlock happens... Well, I need to get more knowledge on the subject to analyze it in more detail, but next time :))

- NOTE: if I disable INSERT/DELETE in the WRITE transaction and do SELECT and UPDATE only, the deadlocks disappear...

- next, why did I not see deadlocks with MySQL?... Is it normal or wrong? Anyway, accessing the same data concurrently may slow down or speed up performance as well, but how to know? Just by testing :)
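To make the Anti-DeadLock ID rule described above easier to follow, here is a minimal sketch of it in Python. This is not the db_STRESS Injector code itself: the function name, the 1M value for MAX_OBJECT_ID and the assumption that Session_ID runs from 0 to Numb_of_sessions - 1 are mine, for illustration only. The idea is that every ID drawn by a session ends up with remainder Session_ID modulo the number of sessions, so two different sessions can never draw the same ID.

```python
import random

MAX_OBJECT_ID = 1_000_000   # assumed value, matching the 1M-object volume
NUM_SESSIONS = 64           # the 64 concurrent sessions mentioned above


def pick_object_id(session_id, rng=random):
    """Draw a random object ID reserved for one session.

    session_id is assumed to be in 0..NUM_SESSIONS-1 (not stated in the post).
    """
    # Original rule: ID = random() % MAX_OBJECT_ID + 1, i.e. 1..MAX_OBJECT_ID
    obj_id = rng.randrange(MAX_OBJECT_ID) + 1
    # Added rule: round down to a multiple of NUM_SESSIONS, then add the
    # session number, so obj_id % NUM_SESSIONS == session_id afterwards.
    # Note: at the edges the result can land slightly outside
    # 1..MAX_OBJECT_ID (e.g. 0 for session 0); the rule as quoted above
    # does not handle that case, and neither does this sketch.
    return obj_id - obj_id % NUM_SESSIONS + session_id


if __name__ == "__main__":
    for sid in (0, 1, 63):
        ids = [pick_object_id(sid) for _ in range(5)]
        print(sid, ids, [i % NUM_SESSIONS for i in ids])
```

Each session still covers the whole ID range, just on its own "stripe" of every 64th ID, so the data access pattern stays random while row-level collisions between sessions are ruled out.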

Well, to stay honest till the end: after all these improvements at the I/O level and integrating the Anti-DeadLock solution, I of course tried to rerun the previous MySQL tests, at least the hardest one, "Stress RW=1". And yes, there is a difference - MySQL performance level became even worse!... And as it was already bad, I don't want to rub it in: let's keep the previously obtained results for MySQL and go ahead with PostgreSQL! :)

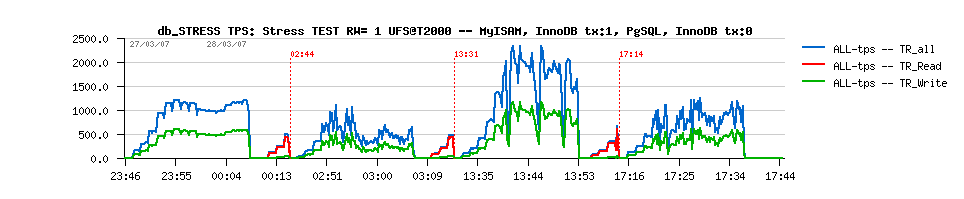

Anyway, here are some RW=1 activity snapshots from various probe tests...

Well, initially I wanted to test the same PostgreSQL version but compiled with the Sun compiler (Sun Studio 11) and generating 64bit binaries. However, the 64bit version showed me 7 errors in the 'make check' test and probably hides some other potential errors. So I decided to stay with 32bit binaries, but at least well optimized for the SPARC platform. All check tests have passed so far, and as the database gave the impression of running really faster, to differentiate it from the previous one I just called it 'pgsql-FF' (Fast & Furious) - at least I hope so :))

IMPORTANT! The previous tests went so well that I discovered another bottleneck on the V890 server - its supposedly Gbit network card turned out to be a simple 100Mbit interface! It's too late to repair it now, but I need to keep it in mind: whenever the V890 is stuck at some performance level, check the network load first! :)

Conditions:

- PostgreSQL: v8.2.3 32bit FF (Fast & Furious :))

- V890 4CPU US4+ 1800MHz

- T2000 UST1 8cores 1200MHz

- Volumes: 1M, 10M objects

- Direct=OFF

- fsync=ON

- wal_sync_method=open_datasync

- autovacuum=OFF