This article is inspired by Percona blog post comparing MySQL 8.0 and Percona Server 5.7 on IO-bound

workload with Intel Optane storage. There are several claims made by Vadim based on a single test case, which is simply unfair. So, I'll try to clarify this all based on more test results and more

tech details..

But before we start, some intro :

InnoDB Parallel Flushing -- was introduced with MySQL 5.7 (as a single-thread flushing could no more follow), and implemented as dedicated parallel threads

(cleaners) which are involved in background once per second to do LRU-driven flushing first (in case there is no more or too low amount of free pages) and then REDO-driven flushing (to flush the

oldest dirty pages and allow more free space in REDO). The amount of cleaners was intentionally made configurable as there were many worries that these threads will use too much CPU ;-)) -- but at

least configuring their number equal to number of your Buffer Pool (BP) Instances was resulting in nearly the same as if you have dedicated cleaner-per-BP-instance.

Multi-threaded LRU Flusher -- was introduced in Percona Server 5.7, implementing dedicated LRU cleaners (one thread per BP instance) independently running in background. The real valid

point in this approach is to keep LRU cleaners independent to so called "detected activity" in InnoDB (which was historically always buggy), so whatever happens, every LRU cleaner remains active to

deliver free pages according the demand. While in MySQL 5.7 the same was expected to be covered by involving "free page event" (not what I'd prefer, but this is also historical to InnoDB). However,

on any IO-bounded workload I've tested with MySQL 5.7 and 8.0 by configuring 16 BP instances with 16 page cleaners and with LRU depth setting matching the required free page rate -- I've never

observed lower TPS comparing to Percona..

Single Page Flushing -- historically, in InnoDB when a user thread was not able to get a free page for its data, it was involving a "single page flush" itself, expecting to get a free page

sooner -- the motivation behind such an approach was "better to try to do something than just do nothing". And this was blamed so often.. -- while, again, it's largely exaggerated, because the only

real problem here is coming due a historical "limited space" for single page flush in DoubleWrite Buffer, and that's all. To be honest, making this option configurable could allow anyone to evaluate

it very easily and decide to keep it ON or not by his own results ;-))

DoubleWrite Buffer -- probably one of the biggest historical PITA in InnoDB.. -- the feature is implemented to guarantee page "atomic writes" (e.g. to avoid partially written pages, each

page is written first to DoubleWrite (DBLWR) place, and only then to its real place in data file). It was still "good enough" while storage was very slow, but quickly became a bottleneck on faster

storage. However, such a bottleneck you could not observe on every workload.. -- despite you have to write your data twice, but as long as your storage is able to follow and you don't have waits on

IO writes (e.g. not on REDO space nor on free pages) -- your overall TPS will still not be impacted ;-)) The impact is generally becomes visible since 64 concurrent users (really *concurrent*, e.g.

doing things on the same time). Anyway, we addressed this issue yet for MySQL 5.7, but our fix arrived after GA date, so it was not delivered with 5.7 -- on the same time Percona delivered their

"Parallel DoubleWrite", solving the problem for Percona Server 5.7 -- lucky guys, kudos for timing ! ;-))

Now, why we did NOT put all these points on the first priority for MySQL 8.0 release ?

-

there is one main thing changes since MySQL 8.0 -- for the first time in MySQL history we decided to move to "continuous release" model !

-

which means that we may still deliver new changes with every update ;-))

-

(e.g. if the same was possible with 5.7, the fix for DBLWR would be already here)

-

however, we should be also "realistic" as we cannot address fundamental changes in updates..

-

so, if any fundamental changes should be delivered, they should be made before GA deadline

-

and the most critical from such planned changes was our new REDO log implementation !

-

(we can address DBLWR and other changes later, but redesigning REDO is much more complex story ;-))

-

so, yes, we're aware about all the real issues we have, and we know how to fix them -- and it's only a question of time now..

So far, now let's see what of the listed issues are real problems and how much each one is impacting ;-))

For my following investigation I'll use :

-

the same 48cores-HT 2S Skylake server as before

-

x2 Optane drives used together as a single RAID-0 volume via MDM

-

same OL7.4, EXT4

-

Sysbench 50M x 8-tables data volume (same as I used before, and then Vadim)

-

Similar my.conf but with few changes :

-

trx_commit=1 (flush REDO on every COMMIT as before)

-

PFS=on (Performance Schema)

-

checksums=on (crc32)

-

doublewrite=off/on (to validate the impact)

-

binlog=off/on & sync_binlog=1 (to validate the impact as well)

Test scenarios :

-

Concurrent users : 32, 64, 128

-

Buffer Pool : 128GB / 32GB

-

Workload : Sysbench OLTP_RW 50Mx8tab (100GB)

-

Config variations :

-

1) base config + dblwr=0 + binlog=0

-

2) base config + dblwr=1 + binlog=0

-

3) base config + dblwr=0 + binlog=1

-

4) base config + dblwr=1 + binlog=1

-

where base config : trx_commit=1 + PFS=on + checksums=on

NOTE : I did not build for all the following test results "user friendly" charts -- all the graphs are representing real TPS (Commit/sec) stats collected during the tests, and matching 3 load

levels : 32, 64, and 128 concurrent users.

128GB BUFFER POOL

So far, let's start first with Buffer Pool =128GB :

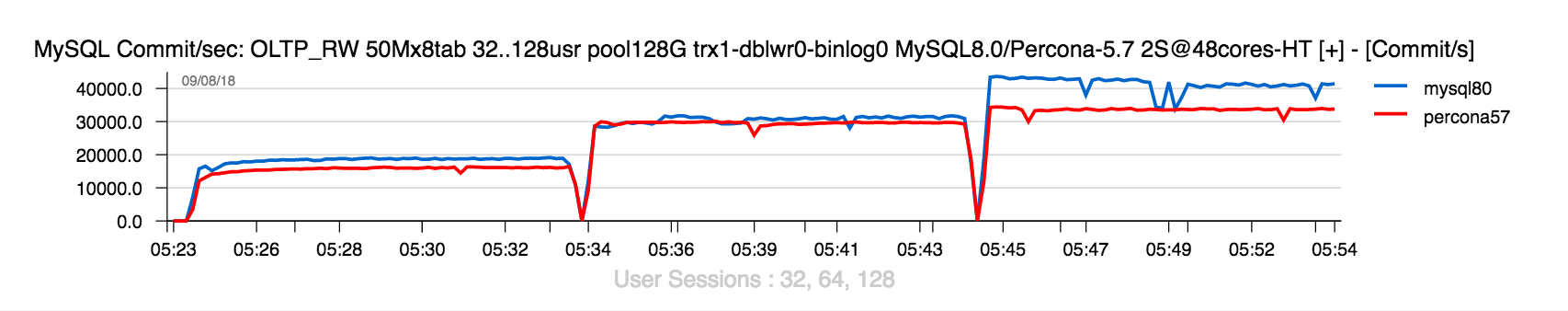

OLTP_RW-50Mx8tab | BP=128G, trx=1, dblwr=0, binlog=0

Comments :

-

with Buffer Pool (BP) of 128GB we keep the whole dataset "cached" (so, no IO reads)

-

NOTE : PFS=on and checksums=on -- while TPS is mostly the same as in the previous test on the same data volume (where both PFS & checksums were OFF), they are not impacting here

-

as well from the previous test you can see that even if the data are fully cached in BP, there is still an impact due used storage -- the result on Intel SSD was way lower than on Intel Optane

-

so, in the current test result you can see that MySQL 8.0 also getting better benefit from a faster storage comparing to Percona Server, even if the given test is mostly about REDO related improvements ;-))

And now, let's switch DobleWrite=ON :

OLTP_RW-50Mx8tab | BP=128G, trx=1, dblwr=1, binlog=0

Comments :

-

amazing, but enabling DoubleWrite (DBLWR) is not impacting TPS on MySQL 8.0

-

and it's rather opposite -- TPS rate is going slightly higher !

-

this "false gain" is only coming here because data page writes are going slower due DBLWR code bottleneck, so REDO writes are going faster, and this we can only understand if we testing both cases (with and without DBLWR enabled)..

-

see the same observation in the previously tested TPCC workload as well

So far, let's additionally enable Binlog now :

OLTP_RW-50Mx8tab | BP=128G, trx=1, dblwr=1, binlog=1

Comments :

-

indeed, the Binlog impact here is really huge..

-

this is not new either, and MySQL 8.0 performance is impacted by Binlog much more harder

Let's get now an all-in-one picture related to these tests for MySQL 8.0 :

OLTP_RW-50Mx8tab MySQL-8.0 | BP=128G

Comments :

-

as you can see, there is no impact on TPS if DBLWR is enabled

-

however, the Binlog impact is severe, with or without DBLWR

-

you can find few more details about Binlog impact in MySQL 8.0

-

from the other side, all those guys who blamed me to not having DBLWR enabled in the previous test results -- do you realize now that these results were in fact the most honest ?.. -- because by enabling DBLWR they would be better than previously published, which would be simply unfair ;-))

And all-in-one picture with Percona Server 5.7 now :

OLTP_RW-50Mx8tab Percona Server-5.7 | BP=128G

Comments :

-

all looks similar, except there is no "gain" when DBLWR is enabled

-

(and this is at least "as expected", and once DBLWR will be fixed in 8.0, this "gain" will finally gone in 8.0 too)

Ok, this all was rather a "preface" to show you that "everything is relative", and impact of every thing is directly depending on workload..

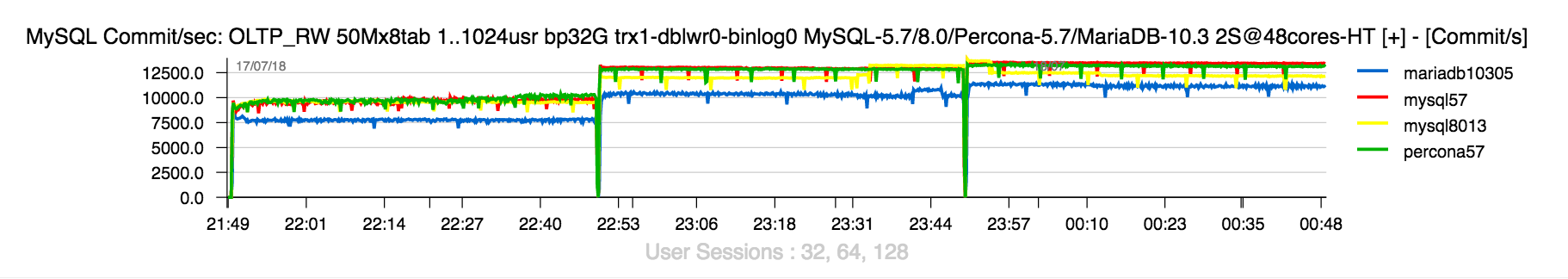

32GB BUFFER POOL

Now, let's replay the same test conditions, but with BP = 32GB (as it was used in my and Vadim's tests).

NOTE :

-

in the following tests each load level was executed for 1H (Vadim reported some TPS drops in 8.0 over a time, so I wanted to be sure we did not miss something and can reproduce/address the issue (if any) -- "unfortunately", I did not meet any such a drop on any of 3 tested load levels)

-

I've also added into the game MySQL 5.7 and MariaDB 10.3 to complete the puzzle

-

as well I was surprised in Vadim's results to see MySQL 8.0 to do some time better than MySQL 5.7, while in the given test conditions there is no any reason for this -- it's a heavy IO-bound, and for the moment DBLWR bottleneck in 8.0 is the same as in 5.7

Let's get a look on the fist test case, when both DBLWR and Binlog are switched OFF :

OLTP_RW-50Mx8tab | BP=32G, trx=1, dblwr=0, binlog=0

Comments :

-

as you can see, on all 3 load levels (32, 64, and 128 concurrent users) there is NO any difference in TPS between MySQL 5.7 and Percona Server 5.7

-

indeed, MySQL 8.0 is doing slightly less TPS here for some strange reasons (I need to investigate it yet little bit more, as it also can do slightly higher TPS in the same test, but I don't want to claim it's doing better until it'll not do it all the time ;-))

-

however, the most important point here is about "MT flusher" and "Single page flush" -- they are absolutely NOT impacting overall transactional performance ! -- I don't despite here if the feature is right or wrong, I'm just proving you that even with initial parallel flushing design since MySQL 5.7 and still with user threads doing single page flush in 5.7/8.0 (yes, it's not zero) -- you may still reach the best possible performance in your IO-bound workload ! (and again, it's on very fast Intel Optane ! -- while it's much more straightforward on a slower flash storage)..

Things are radically changing since we enable DBLWR :

OLTP_RW-50Mx8tab | BP=32G, trx=1, dblwr=1, binlog=0

Comments :

-

on 32 concurrent users there is still no (yet) any TPS difference between MySQL 5.7/8.0 and Percona 5.7

-

the difference becomes visible since 64 users (something like 20%)

-

and then it's extremely visible on 128 users !

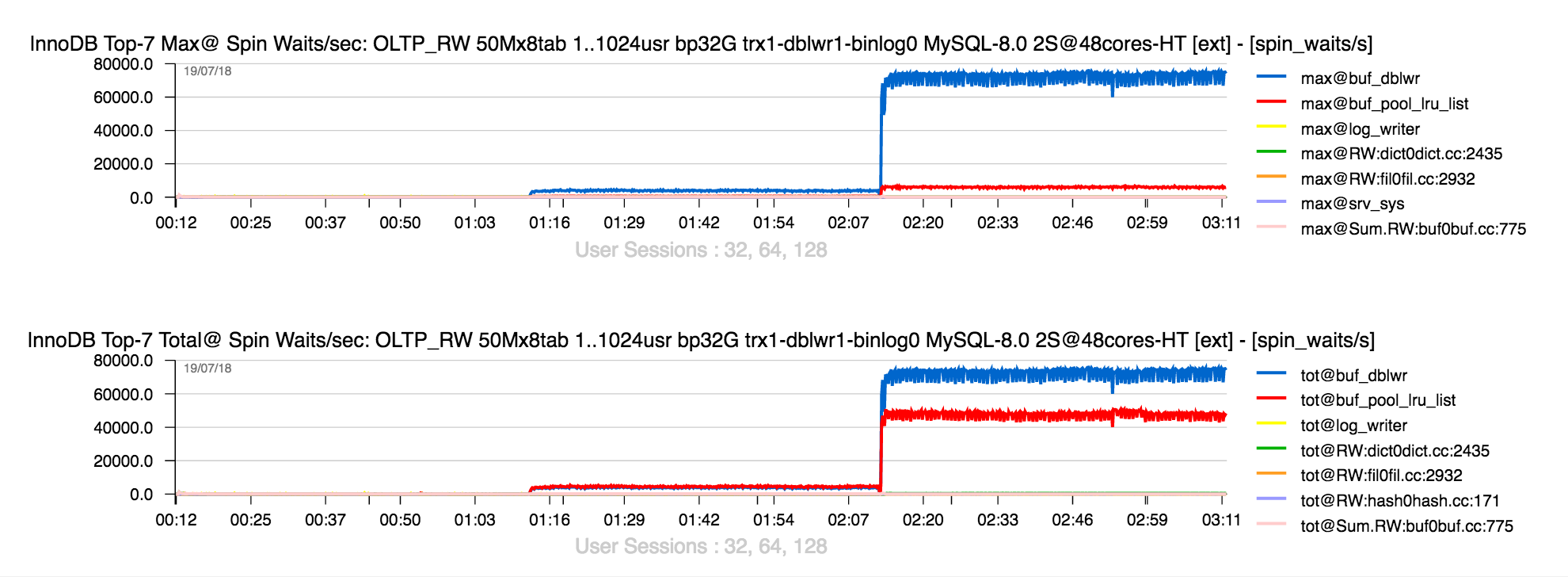

And this is due a know problem in the old DBLWR design hitting contention on its buffer lock :

Once DBLWR code will be fixed in MySQL 8.0 -- you'll not see it anymore, and TPS level will be definitively much higher, no doubt ! While on the same time I'm curious :

-

why publish only 128usr load result ?

-

did not you blame us every time to be focused only on high loads ? ;-))

-

here is 32usr results, all is fine, no ? ;-))

-

this was exactly the reason why fixing DBLWR was not the #1 priority for MySQL 8.0 GA timeframe !

-

because as poor as it is, DBLWR may still NOT impact your performance in non-IO-bound workloads (when your active dataset is cached in BP), and still NOT impact your IO-bound workload up to 32 concurrent users (*concurrent* is the key here, as you can have over 10K *connected* users, and only few of them are really concurrent on the same time)..

-

yes, since 64usr load level you may observe TPS regression.. -- but again, this is still only possible with a really fast flash storage (like Intel Optane)

-

wasn't it much more important to fix our regression on UPDATE performance which came with MySQL 5.7 ? -- huge negative gap comparing to MySQL 5.6, and now it's x2 times better with MySQL 8.0 ! -- BTW, Percona Server 5.7 is as bad as MySQL 5.7 here, right ? -- but who cares..

-

indeed, whatever you do, someone will still find a reason to blame you ;-))

But well, let's get a look now on the Binlog impact itself (with DBLWR=off) :

OLTP_RW-50Mx8tab | BP=32G, trx=1, dblwr=0, binlog=1

Comments :

-

Binlog is impacting here much less than in the previous tests with 128GB BP

-

and this is due the nature of Binlog impact -- it's not due Binlog writes, but due some waits in Binlog code..

-

while on IO-bound workload these waits are less visible..

-

same TPS here on MySQL 5.7/8.0 and Percona Server 5.7

And now combined with DBLWR :

OLTP_RW-50Mx8tab | BP=32G, trx=1, dblwr=1, binlog=1

Comments :

-

TPS goes mostly to the levels where is was before with DBLWR=on only

-

CONCLUSION : All this story is only about InnoDB DoubleWrite, and nothing else !

Now : the given IO-bound workload is fully saturating x2 Optane drives, we're hitting over 4000MB/sec overall throughput rate.. -- but the goal for any production system is NOT to do IO for IO..

We can use any fast flash storage much more efficiently by configuring smaller page size for InnoDB !

4K PAGE SIZE

I've already explained in details why using 4K page size in IO-bound OLTP workloads may be much more efficient even comparing to "compression-oriented" solutions, which is allowed us to deliver

1M QPS on IO-bound SELECTs ! As well this is also delivered a pretty good speed-up

on TPCC workload results even on as low storage. But now let's see what will change if we will more to 4K

page in a given workload.

The first result is with both DBLWR and Binlog switched OFF :

OLTP_RW-50Mx8tab-page4K | BP=32G, trx=1, dblwr=0, binlog=0

Comments :

-

20K TPS with MySQL 8.0 on 128usr load level !!!

-

isn't awesome ?? ;-))

-

this was possible due fixed in 8.0 historical scalability limitation in InnoDB on IO operations !

-

who said MySQL 8.0 was not ready for fast flash storage ? ;-))

-

NOTE : in the given workload on 20K TPS level we're delivering 750K (!!) IO op/sec, and NOT yet saturating Optane drives !

-

(however, we're now saturating CPUs.. -- sounds odd "CPU-bound IO-bound", but it's our today's reality and near future, which is coming with more and more fast flash storage + yet more fast NVRAM.. -- there will be a lot of fun ahead ;-))

And now with DBLWR enabled :

OLTP_RW-50Mx8tab-page4K | BP=32G, trx=1, dblwr=1, binlog=0

Comments :

-

indeed, DBWLR is impacting..

-

on the same time, even with DBLWR=on, with 4K page on MySQL 8.0 we're getting up to 64 users the same TPS as it was with default 16K page, but with DBLWR switched off ! ;-))

-

also, pretty sure by tuning InnoDB thread concurrency (set it to 64 for ex.) we can avoid TPS drop on 128 users (but I'm skipping this here ;-))

Now DBLWR=off, but Binlog=on :

OLTP_RW-50Mx8tab-page4K | BP=32G, trx=1, dblwr=0, binlog=1

Comments :

-

Binlog is impacting again, but not that much as in initial tests with 128GB BP

-

in fact as soon as processing activity becomes more CPU-bound -- we're hitting Binlog waits..

And now with both DBLWR and Binlog are enabled :

OLTP_RW-50Mx8tab-page4K | BP=32G, trx=1, dblwr=1, binlog=1

Comments :

-

as expected, the biggest impact is still coming from DBLWR

-

and I'm very curious to see how the picture will change once DBLWR code will be fixed in MySQL 8.0

INSTEAD OF SUMMARY

-

the main impact in the given IO-bound OLTP_RW workload is only DBLWR and nothing else !

-

and again, if your workload has more than 32 *concurrent* users sessions + using a very fast flash storage..

-

so far, impatient to see DBLWR fixed in MySQL 8.0 ;-))

-

using 4K page size is absolutely to consider for any IO-bound workloads !

-

NOTE : every Linux vendor today is claiming that 4K IO writes in Linux are *atomic* ! -- and if this is really true for your platform, then you can safely disable DBLWR if you're using 4K page and already reach x2 times higher TPS with MySQL 8.0 today in the given IO-bound OLTP_RW or any similar ! ;-)) -- the same x2 times higher TPS was also observed on IO-bound TPCC even with an old SSD drive !

-

while if your workload is not IO-bound (having active dataset mostly cached in BP, none or very low IO reads) -- then DBLWR is not your main impact ! -- you may always tune your MySQL instance to make it mostly "invisible"..

-

Binlog -- is the main impact in this case.. Unfortunately it's another old historical PITA in MySQL Server, and it's largely a time now to get it fixed (or come with a more advanced alternative).. -- "nature is always finding its way", so let's see..

-

no comments on MariaDB 10.3 performance.. -- but a good live example that just copying InnoDB code from MySQL 5.7 is not enough to get it running right..

SIDE NOTE : the progress in MySQL Development today is the most impressive than ever ! -- but of course, it's still way far from "perfect", and who knows when this perfect level will be

reached.. -- but as usual, everything is relative ;-)) When I'm looking back and revisiting benchmark results from the previous MySQL versions it's like a day and night ;-)) And it's not only about

benchmarks.. Work in progress ! and much more good surprises yet to come !

Thank you for using MySQL ! -- stay tuned ;-))

Rgds,

-Dimitri

Appendix : my.conf

[mysqld] # general ssl=0 server_id=5400 performance_schema=on max_connections=4000 back_log=1500 table_open_cache=8000 table_open_cache_instances=16 max_prepared_stmt_count=512000 default_password_lifetime=0 default_authentication_plugin=mysql_native_password character_set_server=latin1 collation_server=latin1_swedish_ci skip-character-set-client-handshake transaction_isolation=REPEATABLE-READ # files innodb_file_per_table innodb_log_file_size=1024M innodb_log_files_in_group=32 innodb_open_files=4000 # buffers innodb_buffer_pool_size=128000M / 32000M innodb_buffer_pool_instances=16 innodb_log_buffer_size=64M # tune innodb_thread_concurrency=0 innodb_max_dirty_pages_pct=90 innodb_max_dirty_pages_pct_lwm=10 join_buffer_size=32K sort_buffer_size=32K innodb_use_native_aio=1 innodb_stats_persistent=1 innodb_spin_wait_delay=6 innodb_max_purge_lag_delay=300000 innodb_max_purge_lag=0 innodb_flush_method=O_DIRECT innodb_io_capacity=10000 innodb_io_capacity_max=40000 innodb_lru_scan_depth=9000 innodb_change_buffering=none innodb_read_only=0 innodb_page_cleaners=16 innodb_undo_log_truncate=off # data protection innodb_flush_log_at_trx_commit=1 innodb_doublewrite = 0 | 1 innodb_checksum_algorithm=crc32 # binlog-ON # --------------------- log_bin=binlog sync_binlog=1 binlog_format=ROW binlog_row_image=minimal # binlog-OFF # --------------------- ##skip_log_bin=1 # perf special innodb_adaptive_flushing=1 innodb_flush_neighbors=0 innodb_read_io_threads=16 innodb_write_io_threads=16 innodb_purge_threads=4 innodb_adaptive_hash_index=0 # monitoring innodb_monitor_enable='%'