« MySQL Performance: InnoDB Buffer Pool Instances in 5.6 | Main | MySQL Performance: Linux I/O and Fusion-IO, Part #2 »

Tuesday, 23 October, 2012

MySQL Performance: Linux I/O and Fusion-io

This article is following the previously

published investigation about I/O limitations on Linux and also

sharing my data from the steps in investigation of MySQL/InnoDB I/O

limitations within RW workloads..

So far, I've got in my hands a

server with a Fusion-io card and I'm expecting now to analyze more in

details the limits we're hitting within MySQL and InnoDB on heavy

Read+Write workloads. As the I/O limit from the HW level should be way

far due outstanding Fusion-io card performance, contentions within

MySQL/InnoDB code should be much more better visible now (at least I'm

expecting ;-))

But before to deploy on it any of MySQL test

workloads, I want to understand the I/O limits I'm hitting on the lower

levels (if any) - first on the card itself, and then on the filesystem

levels..

NOTE : in fact I'm not interested here in the

best possible "tuning" or "configuring" of the Fusion-io card itself --

I'm mainly interested in the any possible regression on the I/O

performance due adding other operational levels, and in the current

article my main concern is about a filesystem. The only thing I'm sure

in the current step is to not use CFQ I/O scheduler (see previous

results), but rather NOOP or DEADLINE instead ("deadline" was used

within all the following tests).

As in the previous I/O testing,

all the following test were made with IObench_v5 tool. The server I'm

using has 24cores (Intel Xeon E7530 @1.87GHz), 64GB RAM, running Oracle

Linux 6.2. From the filesystems In the current testing I'll compare only

two: EXT4 and XFS. EXT4 is claiming to have a lot of performance

improvements made over the past time, while XFS was the most popular

until now in the MySQL world (while in the recent tests made by Percona

I was surprised to see EXT4 too, and some other users are claiming to

observe a better performance with other FS too.. - the problem is that I

also have a limited time to satisfy my curiosity, that's why there are

only two filesystems tested for the moment, but you'll see it was

already enough ;-))

Then, regarding my I/O tests:

- I'm testing here probably the most worse case ;-)

- the worst case is when you have just one big data file within your RDBMS which become very hot in access..

- so for a "raw device" it'll be a 128GB raw segment

- while for a filesystem I'll use a single 128GB file (twice bigger than the available RAM)

- and of course all the I/O requests are completely random.. - yes, the worse scenario ;-)

- so I'm using the following workload scenarios: Random-Read (Rrnd), Random-Writes (Wrnd), Random-Read+Write (RWrnd)

- same series of test is executed first executed with I/O block size = 4KB (most common for SSD), then 16KB (InnoDB block size)

- the load is growing with 1, 4, 16, 64, 128, 256 concurrent IObench processes

-

for filesystem file acces options the following is tested:

- O_DIRECT (Direct) -- similar to InnoDB when files opened with O_DIRECT option

- fsync() -- similar to InnoDB default when fsync() is called after each write() on a given file descriptor

- both filesystems are mounted with the following options: noatime,nodiratime,nobarrier

Let's start with raw devices first.

RAW Device

By the very first view, I was pretty impressed by the Fusion-io card I've got in my hands: 0.1ms latency on an I/O operation is really good (other SSD drives that I have on the same server are showing 0.3 ms for ex.). However thing may be changes when the I/O load become more heavy..

Let's get a look on the Random-Read:

Random-Read, bs= 4K/16K :

Observations :

- left part of the graphs representing I/O levels with block size of 4K, and the right one - with 16K

- the first graph is representing I/O operations/sec seen by the application itself (IObench), while the second graph is representing the KBytes/sec traffic observed by OS on the storage device (currently Fusion-io card is used only)

- as you can see, with 4K block size we're out-passing 100K Reads/sec (and in peak even reaching 120K), and keeping 80K Reads/sec on a higher load (128, 256 parallel I/O requests)

- while with 16K the max I/O level is around of 35K Reads/sec, and it's kept less or more stable with a higher load too

- from the KB/s graph: seems with the 500MB/sec speed we're not far from the max I/O Random-Read level on this configuration..

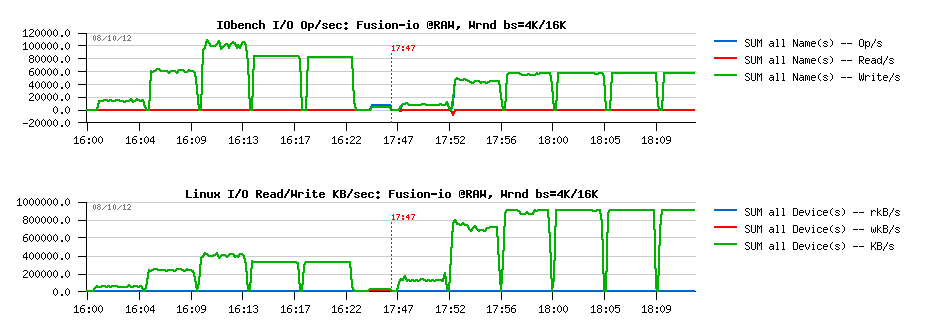

Random-Write, bs= 4K/16K :

Observations :

- very similar to Random-Reads, but on 16K block size showing a twice better performance (60K Reads/s), while 100K is the peak on 4K

- and the max I/O Write KB/s level seems to be near 900MB/sec

- pretty impressive ;-)

Random-RW, bs= 4K/16K :

Observations :

- very interesting results: in this test case performance is constantly growing with a growing load!

- ~80K I/O Operations/sec (Random RW) for 4K block size, and 60K for 16K

- max I/O Level in throughput is near 1GB/sec..

Ok, so let's summarize now the max levels :

- Rrnd: 100K for 4K, 35K for 16K

- Wrnd: 100K for 4K, 60K for 16K

- RWrnd: 78K for 4K, 60K for 16K

So, what will change now once a filesystem level is added to the storage??..

EXT4

Random-Read, O_DIRECT, bs= 4K/16K :

Observations :

- while 30K Reads/sec are well present on 16K block size, we're yet very far from 100K max obtained with 4K on raw device..

- 500MB/s level is well reached on 16K, not on 4K..

- the FS block size is also 4K, and it's strange to see a regression from 100K to 70K Reads/sec on 4K block size..

While for Random-Read access it doesn't make sense to test "fsync() case" (the data will be fully or partially cached by the filesystem), but for Random-Write and Random-RW it'll be pretty important. So, that's why there are 4 cases represented on each graph containing Write test:

- O_DIRECT with 4K block size

- fsync() with 4K block size

- O_DIRECT with 16K block size

- fsync() with 16K block size

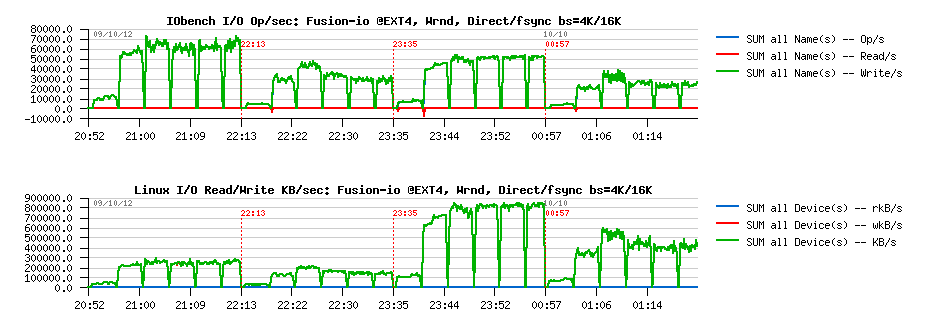

Random-Write, O_DIRECT/fsync bs= 4K/16K :

Observations :

- EXT4 performance here is very surprising..

- 15K Writes/s max on O_DIRECT with 4K, and 10K with 16K (instead of 100K / 60K observed on raw device)..

- fsync() test results are looking better, but still very poor comparing to the real storage capacity..

- in my previous tests I've observed the same tendency: O_DIRECT on EXT4 was slower than write()+fsync()

Looks like internal serialization is still taking place within EXT4. And the profiling output to compare why there is no performance increase on going from 4 I/O processes to 16 is giving the following:

EXT4, 4proc, 4KB, Wrnd: 12K-14K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

1979.00 14.3% intel_idle [kernel]

1530.00 11.1% __ticket_spin_lock [kernel]

1024.00 7.4% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

696.00 5.0% native_write_cr0 [kernel]

513.00 3.7% ifio_strerror [iomemory_vsl]

482.00 3.5% native_write_msr_safe [kernel]

444.00 3.2% kfio_destroy_disk [iomemory_vsl]

...

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

844.00 12.2% intel_idle [kernel]

677.00 9.8% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

472.00 6.9% __ticket_spin_lock [kernel]

264.00 3.8% ifio_strerror [iomemory_vsl]

254.00 3.7% native_write_msr_safe [kernel]

250.00 3.6% kfio_destroy_disk [iomemory_vsl]

EXT4, 16proc, 4KB, Wrnd: 12K-14K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

1600.00 16.1% intel_idle [kernel]

820.00 8.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

639.00 6.4% __ticket_spin_lock [kernel]

543.00 5.5% native_write_cr0 [kernel]

358.00 3.6% kfio_destroy_disk [iomemory_vsl]

351.00 3.5% ifio_strerror [iomemory_vsl]

343.00 3.5% native_write_msr_safe [kernel]

Looks like there is no difference between two cases, and EXT4 is just going on its own speed.

Random-RW, O_DIRECT/fsync, bs= 4K/16K :

Observations :

- same situation on RWrnd too..

- write()+fsync() performs better than O_DIRECT

- performance is far from "raw device" levels..

XFS

Random-Read O_DIRECT bs= 4K/16K :

Observations :

- Rrnd on XFS O_DIRECT is pretty not far from raw device performance

- on 16K block size there seems to be a random issue (performance did not increase on the beginning, then jumped to 30K Reads.sec -- as the grow up happen in the middle of a test case (64 processes), it makes me thing the issue is random..

However, the Wrnd results on XFS is a completely different story:

Random-Write O_DIRECT/fsync bs= 4K/16K :

Observations :

- well, as I've observed on my previous tests, O_DIRECT is faster on XFS vs write()+fsync()..

- however, the most strange is looking a jump on 4 concurrent I/O processes following by a full regression since the number of processes become 16..

- and then a complete performance regression.. (giving impression that no more than 4 concurrent writes are allowed on a single file.. - hard to believe, but there is for sure something is going odd ;-))

From the profiler output looking on the difference between 4 and 16 I/O processes we may see that XFS is hitting a huge lock contention where the code is spinning around the lock and the __ticket_spin_lock() function become the top hot on CPU time:

XFS, 4proc, 4KB, Wrnd: ~40K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

3205.00 11.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

2217.00 7.8% __ticket_spin_lock [kernel]

2105.00 7.4% intel_idle [kernel]

1288.00 4.6% kfio_destroy_disk [iomemory_vsl]

1092.00 3.9% ifio_strerror [iomemory_vsl]

857.00 3.0% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

694.00 2.5% native_write_msr_safe [kernel]

.....

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

5022.00 10.7% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

4166.00 8.9% intel_idle [kernel]

3298.00 7.0% __ticket_spin_lock [kernel]

1938.00 4.1% kfio_destroy_disk [iomemory_vsl]

1378.00 2.9% native_write_msr_safe [kernel]

1323.00 2.8% ifio_strerror [iomemory_vsl]

1210.00 2.6% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

XFS, 16proc, 4KB, Wrnd: 12K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

96576.00 56.8% __ticket_spin_lock [kernel]

17935.00 10.5% intel_idle [kernel]

6000.00 3.5% native_write_msr_safe [kernel]

5182.00 3.0% find_busiest_group [kernel]

2325.00 1.4% native_write_cr0 [kernel]

2239.00 1.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

2052.00 1.2% __schedule [kernel]

972.00 0.6% cpumask_next_and [kernel]

958.00 0.6% kfio_destroy_disk [iomemory_vsl]

952.00 0.6% find_next_bit [kernel]

898.00 0.5% load_balance [kernel]

705.00 0.4% ifio_strerror [iomemory_vsl]

679.00 0.4% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

666.00 0.4% __ticket_spin_unlock [kernel]

I did not find any info if there is any way to tune or to limit spin locks around XFS (while it can be on some kernel level as well, and not be related to XFS..)

And situations with RWrnd is not too much different:

Random-RW O_DIRECT/fsync bs= 4K/16K :

Observations :

- O_DIRECT is still better on XFS vs write()+sync()

- RWrnd performance is far from the storage capacities, and at least observed on a raw device

So, looking on all these EXT4 and XFS test results -- it's clear that if in MySQL/InnoDB you have OLTP RW workload which mostly hot on a one particular table (means a single data file if table has no partitions), then regardless all internal contentions you'll already need to resolve within MySQL/InnoDB code, there will be yet a huge limitation coming on the I/O level from the filesystem layer!..

Looks like having a hot access on a single data file should be avoid whenever possible ;-)

TEST with 8 data files

Let's see now if instead of one single 128GB data file, the load will be distributed between 8 files, 16GB in size each. Don't think any comments are needing for the following test results.

You'll see that:

- having 8 files brings FS performance very close the the RAW device level

- XFS is still performing better than EXT4

- having O_DIRECT gives a better results than write()+fsync()

EXT4

Random-Read O_DIRECT bs= 4K/16K :

Random-Write O_DIRECT/fsync bs= 4K/16K :

Random-RW O_DIRECT/fsync bs= 4K/16K :

XFS

Random-Read O_DIRECT bs= 4K/16K :

Random-Write O_DIRECT/fsync bs= 4K/16K :

Random-RW O_DIRECT/fsync bs= 4K/16K :

IMPACT of data file numbers

With 8 data files we're reaching very closely the RAW device performance on write I/O operations, and O_DIRECT option seems to be the must for both EXT4 and XFS filesystems. Let's see now if performance is already better with 2 or 4 data files.

EXT4 : Random-Write O_DIRECT bs=4K data files= 1/ 2/ 4

Observations :

- confirming once more a true serialization on a file access: each result is near twice as better as the previous one without any difference in results with a growing number of concurrent I/O processes..

- so, nothing surprising performance is yet better with 8 data files

EXT4 : Random-Write O_DIRECT bs=16K data files= 1/ 2/ 4

Observations :

- same tendency as with 4K block size

XFS : Random-Write O_DIRECT bs=4K data files= 1/ 2/ 4

Observations :

- only since 4 data files there is no more performance drop since 64 concurrent I/O processes..

- and having 4 files is still not enough to reach RAW performance of the same storage device here

- while it's way better than EXT4..

XFS : Random-Write O_DIRECT bs=16K data files= 1/ 2/ 4

Observations :

- for 16K block size having 4 data files becomes enough

- on 2 files there is a strange jump on 256 concurrent processes..

- but well, with 4 files it looks pretty similar to 8, and seems to be the minimal number of hot files to have to reach RAW performance..

- and near x1.5 times better performance than EXT4 too..

INSTEAD OF SUMMARY

Seems to reach the max I/O performance from your MySQL/InnoDB database on a flash storage you have to check for the following:

-

your data are placed on XFS filesystem (mounted with

"noatime,nodiratime,nobarrier" options) and your storage device is

managed by "noop" or "deadline" I/O scheduler (see previous tests for

details)

-

you're using O_DIRECT within your InnoDB config (don't know yet if

using 4K page size will really bring some improvement over 16K as

there will be x4 times more pages to manage within the same memory

space, which may require x4 times more lock events and other

overheads.. - while in term of Writes/sec potential performance the

difference is not so big! - from the presented test results in most

cases it's only 80K vs 60K writes/sec -- but of course a real result

from a real database workload will be better ;-))

- and, finally, be sure your write activity is not focused on a single data file! - they should at last be more or equal than 4 to be sure your performance is not lowered from the beginning by the filesystem layer!

To be continued...

Any comments are welcome!

blog comments powered by DisqusNote: if you don't see any "comment" dialog above, try to access this page with another web browser, or google for known issues on your browser and DISQUS..