
Monday, 05 November, 2012

MySQL Performance: Linux I/O and Fusion-IO, Part #2

This post is Part #2, following the previous one. Vadim's comments raised some doubts for me about a possibly radical difference between the implementation of AIO and normal I/O in Linux and its filesystems. Also, I had never used Sysbench for I/O testing until now, and was curious to see it in action. In the previous tests the main suspect was random write (Wrnd) performance on a single data file, so I'm focusing only on this case in the following tests. On XFS, performance issues started at 16 concurrent I/O write processes, so I'm limiting the test cases to 1, 2, 4, 8 and 16 concurrent write threads (Sysbench is multi-threaded). For AIO writes, 2 or 4 write threads may already be more than enough, as each thread manages 128 AIO write requests by default..

A few words about the Sysbench "fileio" test options:

- As already mentioned, it's multithreaded, so all the following tests were executed with 1, 2, 4, 8, 16 threads

- Single 128GB data file is used for all workloads

- Random write is used as workload option ("rndwr")

- It has "sync" and "async" mode options for file I/O, and optional "direct" flag to use O_DIRECT

- For "async" there is also a "backlog" parameter that says how many AIO requests should be managed by a single thread (the default is 128, which is also what InnoDB uses)
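To make the "rndwr" mode concrete, here is a minimal sketch of how block-aligned random offsets can be drawn within one big file. It is not Sysbench's code. The xorshift64 generator and the function names are assumptions for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical PRNG: xorshift64 (the state must start non-zero). */
uint64_t xorshift64(uint64_t *state)
{
    uint64_t x = *state;
    x ^= x << 13;
    x ^= x >> 7;
    x ^= x << 17;
    *state = x;
    return x;
}

/* Return a random offset that is aligned to block_size and whose whole
 * block lies inside a file of file_size bytes. */
uint64_t random_block_offset(uint64_t file_size, uint64_t block_size,
                             uint64_t *state)
{
    assert(block_size > 0 && block_size <= file_size);
    uint64_t nblocks = file_size / block_size;   /* whole blocks only */
    return (xorshift64(state) % nblocks) * block_size;
}
```

For the 128GB file used here, a 16K block size gives 8,388,608 candidate blocks. A 4K block size gives 33,554,432.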

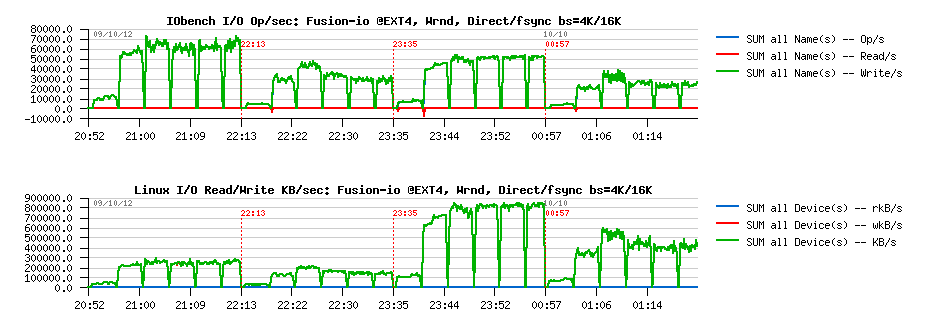

So, let's try "sync" + "direct" random writes first, just to check whether I observe the same things as in my previous tests with IObench:

Sync I/O

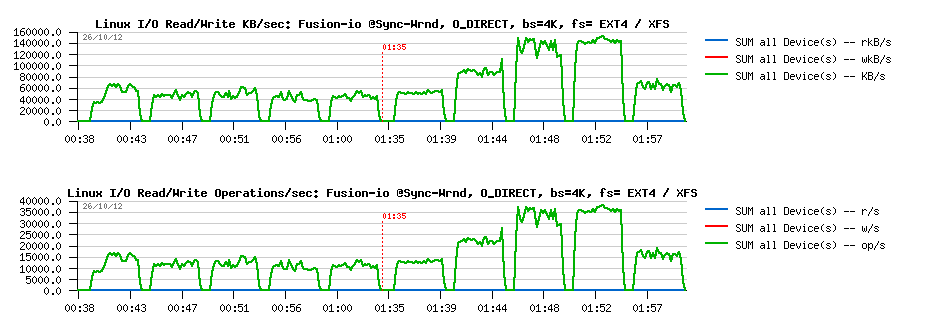

Wrnd "sync"+"direct" with 4K block size:

Observations :

- OK, the result looks very similar to before:

- EXT4 stays at the same write level for any number of concurrent threads (due to I/O serialization)

- while XFS performs more than 2x better, but shows a huge drop starting at 16 concurrent threads..

Wrnd "sync"+"direct" with 16K block size :

Observations :

- Same here, except that XFS's advantage now reaches 4x

- And a similar drop starting at 16 threads..
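In "sync" mode each thread simply issues one blocking write after another, so at most one request per thread is in flight. Below is a minimal sketch of such a writer loop. It assumes a file descriptor opened with O_WRONLY | O_DIRECT and block-aligned offsets. The function name and the 4096-byte buffer alignment are assumptions, not Sysbench internals.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <stdlib.h>
#include <string.h>
#include <sys/types.h>
#include <unistd.h>

/* Write n blocks of bs bytes at the given offsets, one blocking pwrite()
 * per block, as a "sync" mode writer would. Returns the number of blocks
 * fully written, or -1 if the aligned buffer cannot be allocated. */
long sync_write_blocks(int fd, const off_t *offsets, size_t n, size_t bs)
{
    void *buf = NULL;
    /* O_DIRECT needs an aligned buffer; 4096 covers common 512B/4K devices. */
    if (posix_memalign(&buf, 4096, bs) != 0)
        return -1;
    memset(buf, 0xAB, bs);

    long done = 0;
    for (size_t i = 0; i < n; i++) {
        if (pwrite(fd, buf, bs, offsets[i]) != (ssize_t)bs)
            break;                      /* short write or error: stop */
        done++;
    }
    free(buf);
    return done;
}
```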

However, things change radically when AIO is used ("async" instead of "sync").

Async I/O

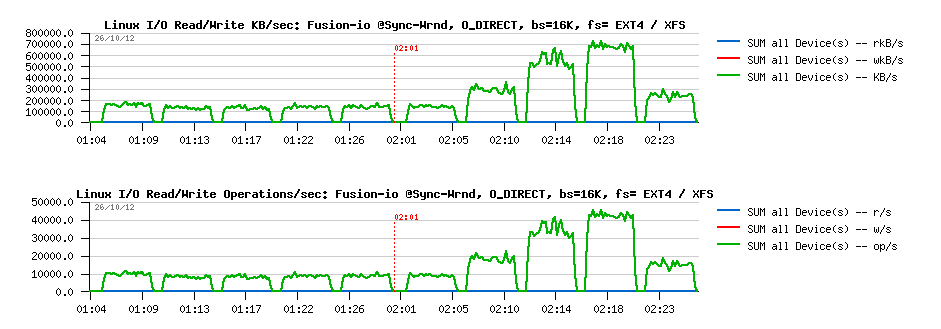

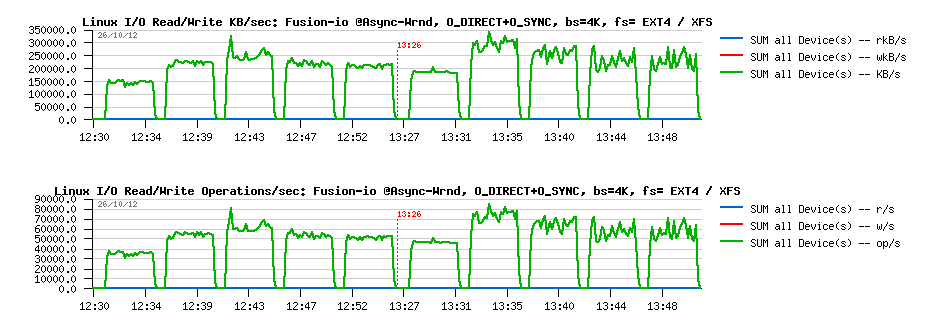

Wrnd "async"+"direct" with 4K block size:

Observations :

- Max write performance is pretty much the same for both filesystems

- EXT4 remains stable at all thread levels, while XFS hits a regression starting at 4 threads..

- Not too far from the RAW device performance observed before..

Wrnd "async"+"direct" with 16K block size:

Observations :

- Pretty similar to the 4K results, except that the XFS regression now starts at 8 threads..

- Both are now not far from the RAW device performance observed in previous tests
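With "async", each thread keeps up to "backlog" requests in flight instead of one. Below is a minimal sketch of one batch using Linux native AIO through the raw io_setup()/io_submit()/io_getevents() system calls (the kernel interface that libaio wraps). The helper names are hypothetical and error handling is reduced. The 128-event limit simply mirrors the default backlog.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <linux/aio_abi.h>   /* struct iocb, struct io_event, IOCB_CMD_PWRITE */
#include <stdint.h>
#include <string.h>
#include <sys/syscall.h>
#include <unistd.h>

#define MAX_EVENTS 128       /* mirrors the default "backlog" of 128 */

/* Describe one pwrite of len bytes from buf at offset off. */
void prep_pwrite(struct iocb *cb, int fd, const void *buf,
                 size_t len, long long off)
{
    memset(cb, 0, sizeof(*cb));
    cb->aio_lio_opcode = IOCB_CMD_PWRITE;
    cb->aio_fildes = (uint32_t)fd;
    cb->aio_buf = (uint64_t)(uintptr_t)buf;
    cb->aio_nbytes = len;
    cb->aio_offset = off;
}

/* Submit up to MAX_EVENTS prepared requests with one io_submit() and
 * wait for all submitted ones. Returns how many completed with their
 * full length, or -1 on setup/submit failure. */
long submit_and_wait(struct iocb **cbs, long n)
{
    aio_context_t ctx = 0;
    struct io_event events[MAX_EVENTS];
    long ok = -1;

    if (n <= 0 || n > MAX_EVENTS)
        return -1;
    if (syscall(SYS_io_setup, n, &ctx) < 0)
        return -1;

    long submitted = syscall(SYS_io_submit, ctx, n, cbs);
    if (submitted > 0) {
        ok = 0;
        long reaped = 0;
        while (reaped < submitted) {
            long got = syscall(SYS_io_getevents, ctx, 1L,
                               submitted - reaped, events, NULL);
            if (got <= 0)
                break;                  /* error: stop waiting */
            for (long i = 0; i < got; i++) {
                struct iocb *cb = (struct iocb *)(uintptr_t)events[i].obj;
                if (events[i].res == (int64_t)cb->aio_nbytes)
                    ok++;
            }
            reaped += got;
        }
    }
    syscall(SYS_io_destroy, ctx);
    return ok;
}
```

With O_DIRECT the buffers must be aligned as usual. Without O_DIRECT, io_submit() on a regular file generally completes synchronously, so native AIO mainly pays off together with O_DIRECT.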

From every point of view, AIO write performance looks way better! Still, I'm surprised by such a spectacular transformation of EXT4.. - I have some doubts here about whether something in the I/O processing is still buffered within EXT4, even when the O_DIRECT flag is used. And if we read the Linux doc about the O_DIRECT implementation, we can see that O_SYNC should be used in addition to O_DIRECT to guarantee synchronous writes:

"O_DIRECT (Since Linux 2.4.10): Try to minimize cache effects of the I/O to and from this file. In general this will degrade performance, but it is useful in special situations, such as when applications do their own caching. File I/O is done directly to/from user space buffers. The O_DIRECT flag on its own makes at an effort to transfer data synchronously, but does not give the guarantees of the O_SYNC that data and necessary metadata are transferred. To guarantee synchronous I/O the O_SYNC must be used in addition to O_DIRECT. See NOTES below for further discussion."

(ref: http://www.kernel.org/doc/man-pages/online/pages/man2/open.2.html)

Sysbench does not open the file with O_SYNC when O_DIRECT is used (the "direct" flag), so I modified the Sysbench code to add it, and obtained the following results:
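The change boils down to adding O_SYNC next to O_DIRECT when the file is opened. Below is a minimal sketch of that idea using a hypothetical helper. It is not the actual Sysbench patch.

```c
#define _GNU_SOURCE             /* exposes O_DIRECT */
#include <assert.h>
#include <fcntl.h>

/* Build open() flags for a write test file: "direct" maps to O_DIRECT,
 * and add_osync ORs in O_SYNC as in the modified test described above. */
int fileio_open_flags(int direct, int add_osync)
{
    int flags = O_RDWR | O_CREAT;
    if (direct)
        flags |= O_DIRECT;
    if (add_osync)
        flags |= O_SYNC;
    return flags;
}
```

A test file would then be opened with something like `open(path, fileio_open_flags(1, 1), 0644)`.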

Async I/O : O_DIRECT + O_SYNC

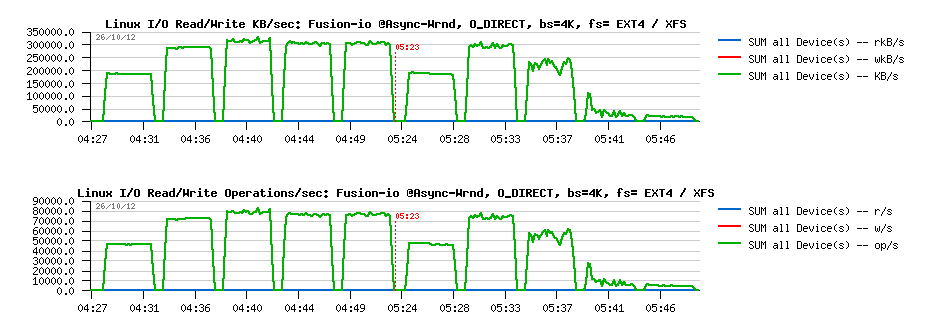

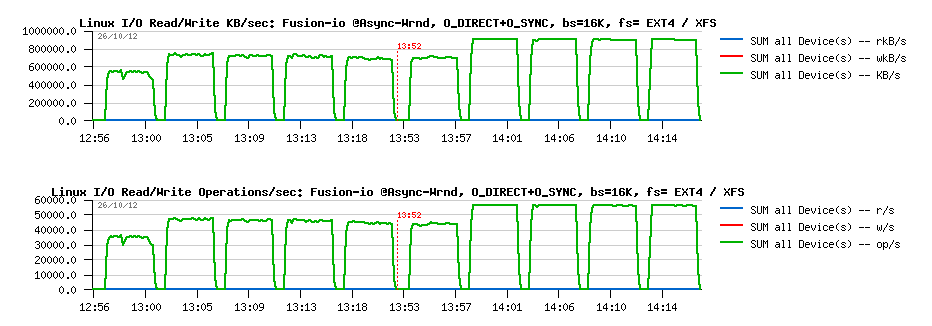

Wrnd "async"+"direct"+O_SYNC with 4K block size:

Observations :

- EXT4 performance becomes lower.. - a 25% cost for O_SYNC, hmm..

- while XFS surprisingly becomes more stable and no longer shows the huge drop observed before..

- XFS also outperforms EXT4 here, though we might still hope for more stable results..

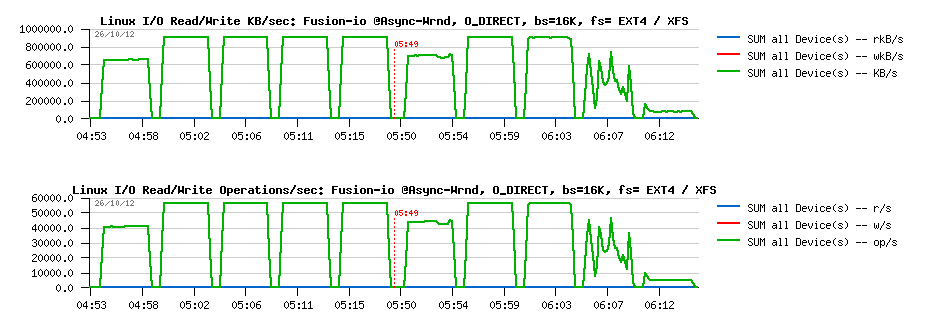

Wrnd "async"+"direct"+O_SYNC with 16K block size:

Observations :

- with 16K block size, both filesystems show rock-stable performance levels

- but XFS does better here (over 15% higher performance), reaching the max performance level it had without O_SYNC

I'm pretty curious about what changes in the XFS code path when O_SYNC is used with AIO, and why it "fixed" the initially observed drops.. But it seems to me that, for safety reasons, O_DIRECT should be used along with O_SYNC within InnoDB. Looking at the source code, this doesn't seem to be the case yet. Alternatively, we could add something like O_DIRECT_SYNC for users who want to be safer with Linux writes, similar to O_DIRECT_NO_FSYNC introduced in MySQL 5.6 for users who don't want to enforce writes with additional fsync() calls..
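To make the proposal concrete, below is a simplified model of how innodb_flush_method values translate, for data files only, into open() flags and an explicit fsync(). The O_DIRECT_SYNC entry is the hypothetical option suggested above, not something MySQL provides. The table also deliberately ignores redo log handling and version-specific details.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <fcntl.h>
#include <stddef.h>
#include <string.h>

struct flush_method {
    const char *name;
    int open_flags;      /* extra flags used when opening data files */
    int fsync_after;     /* 1 if fsync() is still called to flush writes */
};

/* Simplified model for data files only; O_DIRECT_SYNC is hypothetical. */
static const struct flush_method methods[] = {
    { "fsync",             0,                 1 },
    { "O_DIRECT",          O_DIRECT,          1 },
    { "O_DIRECT_NO_FSYNC", O_DIRECT,          0 },
    { "O_DIRECT_SYNC",     O_DIRECT | O_SYNC, 0 },  /* hypothetical */
};

const struct flush_method *find_flush_method(const char *name)
{
    for (size_t i = 0; i < sizeof methods / sizeof methods[0]; i++)
        if (strcmp(methods[i].name, name) == 0)
            return &methods[i];
    return NULL;
}
```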

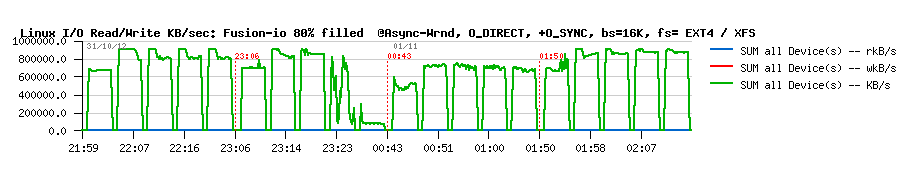

And finally, especially for Mark Callaghan, a short graph with results of the same tests with 16K block size, but with the filesystem filled up to 80% (850GB of the whole 1TB space on the Fusion-io flash card):

Wrnd AIO with 16K block size while 80% of space is filled :

So, there is indeed some regression on every test.. - but maybe not as big as we might have feared. I've also tested the same with the TRIM mount option, but it did not get better. But well, before worrying about this 10% regression, we should first see whether MySQL/InnoDB is able to reach these performance levels at all ;-))

Time for a pure MySQL/InnoDB heavy RW test now..

Tuesday, 23 October, 2012

MySQL Performance: Linux I/O and Fusion-io

This article follows the previously published investigation about I/O limitations on Linux. It also shares my data from the steps of investigating MySQL/InnoDB I/O limitations within RW workloads..

I've now got my hands on a server with a Fusion-io card, and I expect to analyze in more detail the limits we're hitting within MySQL and InnoDB on heavy Read+Write workloads. As the HW-level I/O limit should be far away thanks to the outstanding Fusion-io card performance, contentions within the MySQL/InnoDB code should become much more visible now (at least I'm expecting so ;-))

But before deploying any MySQL test workloads on it, I want to understand the I/O limits I'm hitting at the lower levels (if any) - first on the card itself, and then at the filesystem level..

NOTE: in fact I'm not interested here in the best possible "tuning" or "configuring" of the Fusion-io card itself -- I'm mainly interested in any possible regression in I/O performance due to adding other operational levels, and in the current article my main concern is the filesystem. The only thing I'm sure of at this step is to not use the CFQ I/O scheduler (see previous results), but rather NOOP or DEADLINE instead ("deadline" was used in all the following tests).

As in the previous I/O testing, all the following tests were made with the IObench_v5 tool. The server I'm using has 24 cores (Intel Xeon E7530 @1.87GHz), 64GB RAM, running Oracle Linux 6.2. In the current testing I'll compare only two filesystems: EXT4 and XFS. EXT4 claims a lot of performance improvements made over time, while XFS has been the most popular in the MySQL world until now (though I was surprised to see EXT4 too in the recent tests made by Percona, and some other users claim to observe better performance with other filesystems as well.. - the problem is that I also have limited time to satisfy my curiosity, which is why only two filesystems are tested for the moment, but you'll see it was already enough ;-))

Then, regarding my I/O tests:

- I'm probably testing the worst case here ;-)

- the worst case is when you have just one big data file within your RDBMS which becomes very hot in access..

- so for a "raw device" it'll be a 128GB raw segment

- while for a filesystem I'll use a single 128GB file (twice the size of the available RAM)

- and of course all the I/O requests are completely random.. - yes, the worst scenario ;-)

- so I'm using the following workload scenarios: Random-Read (Rrnd), Random-Write (Wrnd), Random-Read+Write (RWrnd)

- the same series of tests is executed first with I/O block size = 4KB (most common for SSD), then 16KB (InnoDB block size)

- the load is growing with 1, 4, 16, 64, 128, 256 concurrent IObench processes

- for filesystem file access options, the following are tested:

- O_DIRECT (Direct) -- similar to InnoDB when files opened with O_DIRECT option

- fsync() -- similar to InnoDB default when fsync() is called after each write() on a given file descriptor

- both filesystems are mounted with the following options: noatime,nodiratime,nobarrier

Let's start with raw devices first.

RAW Device

At first sight, I was pretty impressed by the Fusion-io card I got my hands on: 0.1ms latency on an I/O operation is really good (other SSD drives I have on the same server show 0.3ms, for ex.). However, things may change when the I/O load becomes heavier..

Let's look at Random-Read first:

Random-Read, bs= 4K/16K :

Observations :

- the left part of the graphs represents I/O levels with a 4K block size, and the right part those with 16K

- the first graph represents I/O operations/sec as seen by the application itself (IObench), while the second represents the KBytes/sec traffic observed by the OS on the storage device (currently only the Fusion-io card is used)

- as you can see, with a 4K block size we exceed 100K Reads/sec (even reaching 120K at peak), and keep 80K Reads/sec under a higher load (128, 256 parallel I/O requests)

- while with 16K the max I/O level is around 35K Reads/sec, and it stays more or less stable under a higher load too

- from the KB/s graph: it seems that at 500MB/sec we're not far from the max Random-Read I/O level of this configuration..

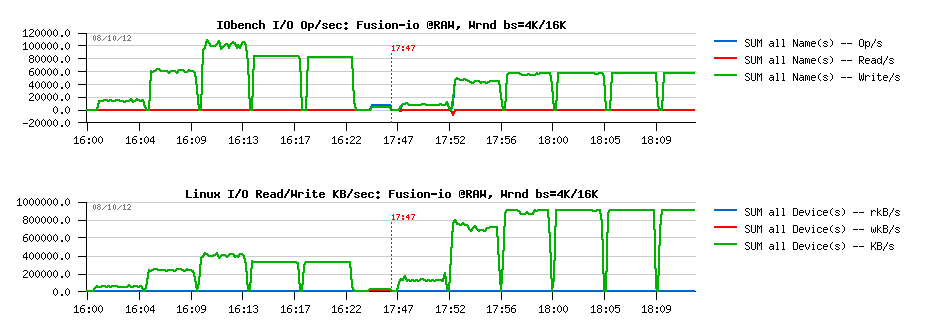

Random-Write, bs= 4K/16K :

Observations :

- very similar to Random-Reads, but the 16K block size shows twice better performance (60K Writes/s), while 100K is the peak with 4K

- and the max Write I/O level in KB/s seems to be near 900MB/sec

- pretty impressive ;-)

Random-RW, bs= 4K/16K :

Observations :

- very interesting results: in this test case, performance keeps growing with the load!

- ~80K I/O Operations/sec (Random RW) for 4K block size, and 60K for 16K

- max I/O throughput level is near 1GB/sec..

OK, so let's now summarize the max levels:

- Rrnd: 100K for 4K, 35K for 16K

- Wrnd: 100K for 4K, 60K for 16K

- RWrnd: 78K for 4K, 60K for 16K
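The IOPS and KB/s graphs are tied together by simple arithmetic: throughput = IOPS × block size. The small helper below can be used to cross-check the summary against the throughput graphs. It assumes binary units (1 MB = 1024 × 1024 bytes).

```c
#include <assert.h>

/* Convert an I/O rate into MiB/s for a given block size in bytes. */
double iops_to_mib_s(double iops, double block_bytes)
{
    return iops * block_bytes / (1024.0 * 1024.0);
}
```

For example, 60K writes/s at 16K gives 937.5 MiB/s, in line with the ~900MB/sec Random-Write level. Likewise, 35K reads/s at 16K gives about 547 MiB/s, close to the ~500MB/sec Random-Read level.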

So, what will change now once a filesystem level is added to the storage??..

EXT4

Random-Read, O_DIRECT, bs= 4K/16K :

Observations :

- while 30K Reads/sec are reached with a 16K block size, with 4K we're still very far from the 100K max obtained on the raw device..

- the 500MB/s level is reached with 16K, but not with 4K..

- the FS block size is also 4K, so it's strange to see a regression from 100K to 70K Reads/sec with a 4K block size..

For Random-Read access it doesn't make sense to test the "fsync()" case (the data will be fully or partially cached by the filesystem), but for Random-Write and Random-RW it's pretty important. That's why there are 4 cases represented on each graph containing a Write test:

- O_DIRECT with 4K block size

- fsync() with 4K block size

- O_DIRECT with 16K block size

- fsync() with 16K block size

Random-Write, O_DIRECT/fsync bs= 4K/16K :

Observations :

- EXT4 performance here is very surprising..

- 15K Writes/s max on O_DIRECT with 4K, and 10K with 16K (instead of 100K / 60K observed on raw device)..

- fsync() test results look better, but are still very poor compared to the real storage capacity..

- in my previous tests I've observed the same tendency: O_DIRECT on EXT4 was slower than write()+fsync()

It looks like internal serialization is still taking place within EXT4. Comparing the profiling output for 4 and 16 I/O processes, to see why there is no performance increase, gives the following:

EXT4, 4proc, 4KB, Wrnd: 12K-14K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

1979.00 14.3% intel_idle [kernel]

1530.00 11.1% __ticket_spin_lock [kernel]

1024.00 7.4% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

696.00 5.0% native_write_cr0 [kernel]

513.00 3.7% ifio_strerror [iomemory_vsl]

482.00 3.5% native_write_msr_safe [kernel]

444.00 3.2% kfio_destroy_disk [iomemory_vsl]

...

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

844.00 12.2% intel_idle [kernel]

677.00 9.8% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

472.00 6.9% __ticket_spin_lock [kernel]

264.00 3.8% ifio_strerror [iomemory_vsl]

254.00 3.7% native_write_msr_safe [kernel]

250.00 3.6% kfio_destroy_disk [iomemory_vsl]

EXT4, 16proc, 4KB, Wrnd: 12K-14K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

1600.00 16.1% intel_idle [kernel]

820.00 8.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

639.00 6.4% __ticket_spin_lock [kernel]

543.00 5.5% native_write_cr0 [kernel]

358.00 3.6% kfio_destroy_disk [iomemory_vsl]

351.00 3.5% ifio_strerror [iomemory_vsl]

343.00 3.5% native_write_msr_safe [kernel]

It looks like there is no difference between the two cases, and EXT4 is simply running at its own pace.

Random-RW, O_DIRECT/fsync, bs= 4K/16K :

Observations :

- same situation on RWrnd too..

- write()+fsync() performs better than O_DIRECT

- performance is far from "raw device" levels..

XFS

Random-Read O_DIRECT bs= 4K/16K :

Observations :

- Rrnd on XFS with O_DIRECT is not far from raw device performance

- with a 16K block size there seems to be a random issue (performance did not increase at the beginning, then jumped to 30K Reads/sec -- as the jump happened in the middle of a test case (64 processes), it makes me think the issue is random..)

However, the Wrnd results on XFS are a completely different story:

Random-Write O_DIRECT/fsync bs= 4K/16K :

Observations :

- well, as observed in my previous tests, O_DIRECT is faster than write()+fsync() on XFS..

- however, the strangest thing is a jump at 4 concurrent I/O processes, followed by a full regression once the number of processes reaches 16..

- and then a complete performance regression.. (giving the impression that no more than 4 concurrent writes are allowed on a single file.. - hard to believe, but something odd is surely going on ;-))

Looking at the difference in profiler output between 4 and 16 I/O processes, we can see that XFS hits huge lock contention: the code spins on a lock, and __ticket_spin_lock() becomes the top CPU consumer:

XFS, 4proc, 4KB, Wrnd: ~40K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

3205.00 11.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

2217.00 7.8% __ticket_spin_lock [kernel]

2105.00 7.4% intel_idle [kernel]

1288.00 4.6% kfio_destroy_disk [iomemory_vsl]

1092.00 3.9% ifio_strerror [iomemory_vsl]

857.00 3.0% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

694.00 2.5% native_write_msr_safe [kernel]

.....

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

5022.00 10.7% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

4166.00 8.9% intel_idle [kernel]

3298.00 7.0% __ticket_spin_lock [kernel]

1938.00 4.1% kfio_destroy_disk [iomemory_vsl]

1378.00 2.9% native_write_msr_safe [kernel]

1323.00 2.8% ifio_strerror [iomemory_vsl]

1210.00 2.6% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

XFS, 16proc, 4KB, Wrnd: 12K writes/sec :

samples pcnt function DSO

_______ _____ ___________________________________________________________________ ______________

96576.00 56.8% __ticket_spin_lock [kernel]

17935.00 10.5% intel_idle [kernel]

6000.00 3.5% native_write_msr_safe [kernel]

5182.00 3.0% find_busiest_group [kernel]

2325.00 1.4% native_write_cr0 [kernel]

2239.00 1.3% ifio_8f406.db9914f4ba64991d41d2470250b4d3fb4c6.3.1.5.126 [iomemory_vsl]

2052.00 1.2% __schedule [kernel]

972.00 0.6% cpumask_next_and [kernel]

958.00 0.6% kfio_destroy_disk [iomemory_vsl]

952.00 0.6% find_next_bit [kernel]

898.00 0.5% load_balance [kernel]

705.00 0.4% ifio_strerror [iomemory_vsl]

679.00 0.4% ifio_03dd6.e91899f4801ca56ff1d79005957a9c0b93c.3.1.5.126 [iomemory_vsl]

666.00 0.4% __ticket_spin_unlock [kernel]

I did not find any info on whether there is a way to tune or limit spin locks around XFS (the contention may also come from some other kernel level, and not be related to XFS at all..)

And the situation with RWrnd is not much different:

Random-RW O_DIRECT/fsync bs= 4K/16K :

Observations :

- O_DIRECT is still better than write()+fsync() on XFS

- RWrnd performance is far from the storage capacity, at least as observed on the raw device

So, looking at all these EXT4 and XFS test results -- it's clear that if you have an OLTP RW workload in MySQL/InnoDB that is mostly hot on one particular table (meaning a single data file if the table has no partitions), then regardless of all the internal contentions you'll already need to resolve within the MySQL/InnoDB code, there will still be a huge limitation at the I/O level coming from the filesystem layer!..

It looks like hot access to a single data file should be avoided whenever possible ;-)

TEST with 8 data files

Let's see now what happens if, instead of one single 128GB data file, the load is distributed between 8 files of 16GB each. I don't think the following test results need any comments.

You'll see that:

- having 8 files brings FS performance very close to the RAW device level

- XFS is still performing better than EXT4

- using O_DIRECT gives better results than write()+fsync()
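Spreading the same load over several files only requires mapping a global block number onto a (file, offset) pair. Below is a minimal round-robin sketch. The struct and function names are hypothetical, and this is not how IObench or InnoDB assign pages to files.

```c
#include <assert.h>
#include <stdint.h>

struct file_pos {
    unsigned file;      /* index of the data file, 0..nfiles-1 */
    uint64_t offset;    /* byte offset inside that file */
};

/* Round-robin striping: consecutive blocks go to consecutive files,
 * so random block numbers spread evenly over all nfiles files. */
struct file_pos map_block(uint64_t block, unsigned nfiles, uint64_t block_size)
{
    struct file_pos p;
    p.file = (unsigned)(block % nfiles);
    p.offset = (block / nfiles) * block_size;
    return p;
}
```

With 8 files and the same 128GB of total data, each file holds 1/8 of the blocks, i.e. 16GB.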

EXT4

Random-Read O_DIRECT bs= 4K/16K :

Random-Write O_DIRECT/fsync bs= 4K/16K :

Random-RW O_DIRECT/fsync bs= 4K/16K :

XFS

Random-Read O_DIRECT bs= 4K/16K :

Random-Write O_DIRECT/fsync bs= 4K/16K :

Random-RW O_DIRECT/fsync bs= 4K/16K :

IMPACT of data file numbers

With 8 data files we come very close to RAW device performance on write I/O operations, and the O_DIRECT option seems to be a must for both EXT4 and XFS. Let's see now whether performance is already better with 2 or 4 data files.

EXT4 : Random-Write O_DIRECT bs=4K data files= 1/ 2/ 4

Observations :

- this confirms once more a true serialization on file access: each result is nearly twice as good as the previous one, with no change in results as the number of concurrent I/O processes grows..

- so, not surprisingly, performance is even better with 8 data files

EXT4 : Random-Write O_DIRECT bs=16K data files= 1/ 2/ 4

Observations :

- same tendency as with 4K block size

XFS : Random-Write O_DIRECT bs=4K data files= 1/ 2/ 4

Observations :

- only from 4 data files on is there no more performance drop at 64 concurrent I/O processes..

- and having 4 files is still not enough to reach RAW performance of the same storage device here

- while it's way better than EXT4..

XFS : Random-Write O_DIRECT bs=16K data files= 1/ 2/ 4

Observations :

- for 16K block size having 4 data files becomes enough

- on 2 files there is a strange jump on 256 concurrent processes..

- but well, with 4 files it looks pretty similar to 8, and 4 seems to be the minimal number of hot files needed to reach RAW performance..

- and nearly 1.5x better performance than EXT4 too..

INSTEAD OF SUMMARY

It seems that to reach max I/O performance from your MySQL/InnoDB database on flash storage, you have to check the following:

- your data are placed on an XFS filesystem (mounted with "noatime,nodiratime,nobarrier" options) and your storage device is managed by the "noop" or "deadline" I/O scheduler (see previous tests for details)

- you're using O_DIRECT in your InnoDB config. (I don't know yet whether a 4K page size will really bring some improvement over 16K, as there will be 4x more pages to manage within the same memory space, which may require 4x more lock events and other overheads.. - while in terms of potential Writes/sec performance the difference is not so big! From the presented test results it's only 80K vs 60K writes/sec in most cases -- but of course a real result from a real database workload will tell us more ;-))

- and, finally, make sure your write activity is not focused on a single data file! - there should be at least 4 hot files to be sure your performance is not limited from the start by the filesystem layer!

To be continued...

Any comments are welcome!

Friday, 06 January, 2012

MySQL Performance: Linux I/O

For a long time I had wanted to run some benchmark tests to better understand the surprises I'd met in the past with Linux I/O performance during MySQL benchmarks. It finally happened last year, but I was only able to organize and present my results now..

My main questions were:

- what is so different between the various I/O schedulers in Linux (cfq, noop, deadline)?..

- what is wrong or right with O_DIRECT on Linux?..

- what makes XFS more attractive compared to EXT3/EXT4?..

There have already been several posts about the impact of one or another Linux I/O layer feature on MySQL performance (for ex. Domas about I/O schedulers, Vadim regarding TPCC-like performance, and many others) - but I still did not find any answer to WHY (for ex.) the cfq I/O scheduler is worse than noop, etc, etc..

So, I'd like to share here some answers to my WHY questions ;-))

(while for today I still have more questions than answers ;-))

Test Platform

First of all, the system I've used for my tests:

- HW server: 64 cores (Intel), 128GB RAM, running RHEL 5.5

- the kernel is 2.6.18, as it has been until now the most common kernel on Linux boxes hosting MySQL servers

- installed filesystems: ext3, ext4, XFS

- Storage: ST6140 (1TB on 16 HDDs striped in RAID0, 4GB cache on the controller) - not a monster, but fast enough to see whether the bottleneck is coming from the storage level or not ;-))

Test Plan

Then, my initial test plan:

- see what max Read/Write I/O performance I can obtain from the given HW at the raw level (just RAW devices, without any filesystem, etc.) - mainly I'm interested here in the impact of the Linux I/O scheduler

- then, based on the observed results, set up each filesystem (ext3, ext4, XFS) more optimally and try to understand their bottlenecks..

- I/O workload: I'm mainly focusing here on the random reads and random writes - they are the most problematic for any I/O related performance (and particularly painful for databases), while sequential read/writes may be very well optimized on the HW level already and hide any other problems you have..

- Test Tool: I'm using here my IObench tool (at least I know exactly what it's doing ;-))

TESTING RAW DEVICES

The implementation of raw devices in Linux is quite surprising.. - it simply involves O_DIRECT access to a block device. So to use a disk in raw mode you have to open() it with the O_DIRECT option (or use the "raw" command, which creates an alias device in your system that always uses the O_DIRECT flag on any open() system call involving it). Using the O_DIRECT flag when opening a file disables any I/O buffering on that file (or device, as a device is also a file in UNIX ;-) - NOTE: by default all I/O requests on block devices (e.g. hard disks) in Linux are buffered, so if you start some kind of I/O write test on, say, your /dev/sda1, you'll obtain incredibly great performance ;-)) as probably no data will have even reached your storage yet, and in reality you'll simply be testing the speed of your RAM.. ;-))

Now, what is "fun" with O_DIRECT:

- the block size of all your I/O requests (read, write, etc.) must be aligned to 512 bytes (i.e. be a multiple of 512 bytes), otherwise your I/O request is simply rejected and you get an error.. - and regarding RAW devices it's quite surprising compared to Solaris, for ex., where you simply use /dev/rdsk/device instead of /dev/dsk/device and may use any block size you want..

- but that's not all.. - the buffer used in your I/O system call must also be aligned to 512 bytes, so in practice you have to allocate it via the posix_memalign() function, otherwise you'll also get an error.. (it seems that some kind of direct memory mapping is used during O_DIRECT operations)

- then, reading the manual: "The O_DIRECT flag on its own makes at an effort to transfer data synchronously, but does not give the guarantees of the O_SYNC that data and necessary metadata are transferred. To guarantee synchronous I/O the O_SYNC must be used in addition to O_DIRECT" - quite surprising again..

- and, finally, you won't be able to use O_DIRECT in your C code unless you #define _GNU_SOURCE (before including any header)
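The rules above can be condensed into a small sketch. It defines `_GNU_SOURCE` to get O_DIRECT, allocates the buffer with posix_memalign(), and checks that the buffer address, the length and the file offset are all multiples of 512 bytes (the offset must be aligned too). The 512-byte constant follows the text. Some devices require 4096, so treat it as an assumption. The helper names are hypothetical.

```c
#define _GNU_SOURCE             /* must come first to expose O_DIRECT */
#include <assert.h>
#include <fcntl.h>
#include <stdint.h>
#include <stdlib.h>
#include <sys/types.h>

#define DIO_ALIGN 512           /* assumption: logical sector size */

/* 1 if this request satisfies O_DIRECT alignment rules, 0 otherwise. */
int dio_request_ok(const void *buf, size_t len, off_t offset)
{
    return ((uintptr_t)buf % DIO_ALIGN) == 0 &&
           (len % DIO_ALIGN) == 0 &&
           (offset % DIO_ALIGN) == 0;
}

/* Allocate a buffer usable with O_DIRECT, or NULL on failure. */
void *dio_alloc(size_t len)
{
    void *p = NULL;
    return posix_memalign(&p, DIO_ALIGN, len) == 0 ? p : NULL;
}

/* Open a file or block device for unbuffered (raw-like) access. */
int dio_open_rw(const char *path)
{
    return open(path, O_RDWR | O_DIRECT);
}
```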

Interestingly, the man page also quotes Linus about O_DIRECT:

"The thing that has always disturbed me about O_DIRECT is that the whole interface is just stupid, and was probably designed by a deranged monkey on some serious mind-controlling substances." Linus

But we have to live with it ;-))

And if you need an example of C or C++ code, rather than showing you mine, I'd point you to a great dev page on the Fusion-io site.

So, what about my storage performance on the RAW devices?..

Test scenario on RAW devices:

- I/O Schedulers: cfq, noop, deadline

- Block size: 1K, 4K, 16K

- Workload: Random Read, Random Write

NOTE: I'm using a 1K block size here as the smallest "useful" size for databases :-)), then 4K as matching the Linux page size (4K), and 16K as the default InnoDB block size until now.

The following graphs represent 9 tests executed one after another: cfq with 3 different block sizes (1K, 4K, 16K), then noop, then deadline. Each test runs a growing workload of 1, 4, 16, 64 concurrent users (processes) bombarding my storage subsystem non-stop with I/O requests.
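Running N concurrent "users" as separate processes is straightforward with fork(). Below is a minimal sketch of such a driver. The function names are hypothetical, and it is not IObench's code. Each child runs a worker function and the parent waits for all of them. A real worker would loop on pread()/pwrite().

```c
#include <assert.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Placeholder worker; a real one would issue random I/O in a loop. */
int demo_worker(int id)
{
    (void)id;
    return 0;
}

/* Start nproc child processes, each running worker(id), and wait for all.
 * Returns how many children exited with status 0. */
int run_processes(int nproc, int (*worker)(int id))
{
    for (int id = 0; id < nproc; id++) {
        pid_t pid = fork();
        if (pid == 0)
            _exit(worker(id));      /* child: run the I/O loop, then leave */
        if (pid < 0)
            break;                  /* could not fork: stop spawning */
    }
    int ok = 0, status;
    while (wait(&status) > 0)       /* reap every child of this process */
        if (WIFEXITED(status) && WEXITSTATUS(status) == 0)
            ok++;
    return ok;
}
```

Calling it with 1, 4, 16 and 64 processes in turn reproduces the growing-load pattern described above.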

Read-Only@RAW-device:

Observations :

- Random Read is scaling well for all Linux I/O Schedulers

- Reads reported by application (IObench) are matching numbers reported by the system I/O stats

- 1K reads run slightly faster than 4K (as expected with "normal" disks: transferring a bigger data volume reduces overall performance)..

Write-Only @RAW-device:

Observations :

- looking at the graph, you can easily see now what is wrong with the "cfq" I/O scheduler.. - it serializes write operations!

- while "noop" and "deadline" continue to scale with a growing workload..

- so, it's clear now WHY performance gains were observed by many people on MySQL workloads by simply switching from "cfq" to "noop" or "deadline"

To check which I/O scheduler is used for your storage device:

# cat /sys/block/{DEVICE-NAME}/queue/scheduler

For ex. for "sda": # cat /sys/block/sda/queue/scheduler

Then set "deadline" for "sda": # echo deadline > /sys/block/sda/queue/scheduler

To set "deadline" as the default I/O scheduler for all your storage devices, you may boot your system with the "elevator=deadline" boot option. Interestingly, many Linux systems use "cfq" by default, while all recent Oracle Linux systems ship with "deadline" by default.
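The scheduler file lists all available schedulers, with the active one in brackets (e.g. `noop anticipatory deadline [cfq]`). Below is a small sketch that extracts the active one. This can be handy if a test harness should refuse to run under cfq. The function names are hypothetical.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Copy the bracketed (active) scheduler name from the content of
 * /sys/block/<dev>/queue/scheduler into out. Returns 0 on success. */
int active_scheduler(const char *line, char *out, size_t outlen)
{
    const char *lb = strchr(line, '[');
    const char *rb = lb ? strchr(lb, ']') : NULL;
    if (!lb || !rb)
        return -1;
    size_t n = (size_t)(rb - lb - 1);
    if (n + 1 > outlen)
        return -1;
    memcpy(out, lb + 1, n);
    out[n] = '\0';
    return 0;
}

/* Read the active scheduler of a block device such as "sda". */
int read_active_scheduler(const char *dev, char *out, size_t outlen)
{
    char path[256], line[256];
    snprintf(path, sizeof path, "/sys/block/%s/queue/scheduler", dev);
    FILE *f = fopen(path, "r");
    if (!f)
        return -1;
    char *got = fgets(line, sizeof line, f);
    fclose(f);
    return got ? active_scheduler(line, out, outlen) : -1;
}
```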

TESTING FILESYSTEMS

As you can understand, there is no reason to continue any further tests using the "cfq" I/O scheduler.. - if it's already bad at the raw level, no filesystem feature can make it better ;-)) (though I was also told that the "cfq" I/O scheduler should perform much better in recent Linux kernels, let's see)..

Anyway, my filesystem test scenario:

- Linux I/O Scheduler: deadline

- Filesystems: ext3, ext4, XFS

- File flags/options: osync (O_SYNC), direct (O_DIRECT), fsync (fsync() is involved after each write()), fdatasync (same as fsync, but calling fdatasync() instead of fsync())

- Block size: 1K, 4K, 16K

- Workloads: Random Reads, Random Writes on a single 128GB file - the most critical file access for any database (having a hot table, or a hot tablespace)

- NOTE: to avoid most background caching effects, I've limited the RAM available for the FS cache to 8GB only! (all other RAM was allocated to a huge SHM segment with huge pages, so not swappable)..
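The four flags/options differ only in how the file is opened and in what is called after each write(). Below is a minimal sketch of one write step per mode. The names are hypothetical. Buffers and offsets are assumed to be suitably aligned when MODE_DIRECT is used.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <fcntl.h>
#include <stdlib.h>
#include <unistd.h>

enum io_mode { MODE_OSYNC, MODE_DIRECT, MODE_FSYNC, MODE_FDATASYNC };

/* Open flags per mode: osync and direct take effect at open() time. */
int mode_open_flags(enum io_mode m)
{
    int flags = O_RDWR | O_CREAT;
    if (m == MODE_OSYNC)
        flags |= O_SYNC;
    if (m == MODE_DIRECT)
        flags |= O_DIRECT;
    return flags;
}

/* One write step: pwrite(), then fsync()/fdatasync() where the mode
 * needs it. Returns 0 on success, -1 on error or short write. */
int write_step(int fd, enum io_mode m, const void *buf, size_t len, off_t off)
{
    if (pwrite(fd, buf, len, off) != (ssize_t)len)
        return -1;
    if (m == MODE_FSYNC)
        return fsync(fd);
    if (m == MODE_FDATASYNC)
        return fdatasync(fd);
    return 0;                       /* osync/direct: nothing extra to call */
}
```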

Also, we now have to keep in mind the highest I/O levels observed on RAW devices:

- Random Read: ~4500 op/sec

- Random Write: ~5000 op/sec

So, if Read or Write performance is faster on any of the filesystems for any reason, it'll be clear that some buffering/caching is happening at the SW level ;-))

Now, let me explain what you'll see on the following graphs:

- there are already too many of them, so I've tried to put more data on each graph :-))

- there are 12 tests on each graph (3 series of 4 tests)

- each series of tests is executed using the same block size (1K, then 4K, then 16K)

- within a series of 4 tests, the 4 flags/options are used one after another (osync, direct, fsync, fdatasync)

- each test is executed as before with 1, 4, 16, 64 concurrent user processes (IObench)

- only one filesystem per graph :-))

So, let's start now with Read-Only results.

Read-Only @EXT3:

Observations :

- scaling pretty well, reaching 4500 reads/sec at max

- on 1K reads: only "direct" reads actually read 1K blocks, all other options involve reading 4K blocks

- nothing unexpected finally :-)

Read-Only @EXT4:

Observations :

- same as on ext3, nothing unexpected

Read-Only @XFS:

Observations :

- no surprise here either..

- but there was one surprise anyway ;-))

While the results of the Random Read workloads look exactly the same on all 3 filesystems, there are still some differences in how the O_DIRECT feature is implemented in them! ;-))

The following graphs represent the same tests, but only those executed with the O_DIRECT flag (direct). The first 3 tests are with EXT3, then 3 with XFS, then 3 with EXT4:

Direct I/O & Direct I/O

Observations :

- the most important here is the last graph, showing memory usage on the system during the O_DIRECT tests

- as you can see, only with XFS is filesystem cache usage near zero!

- while EXT3 and EXT4 still keep buffering in the cache.. - which may be a very painful surprise when you expect to use this RAM for something else ;-))
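The memory graph reflects system-wide page cache usage. One way to watch the same thing from a test program is to read the "Cached:" line of /proc/meminfo. Below is a minimal sketch. The helper names are hypothetical.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Return the kB value of a /proc/meminfo field such as "Cached",
 * parsed from the given text, or -1 if the field is absent. */
long meminfo_kb(const char *text, const char *field)
{
    size_t flen = strlen(field);
    const char *p = text;
    while (p && *p) {
        long kb;
        if (strncmp(p, field, flen) == 0 && p[flen] == ':' &&
            sscanf(p + flen + 1, "%ld", &kb) == 1)
            return kb;
        p = strchr(p, '\n');
        if (p)
            p++;                /* move to the start of the next line */
    }
    return -1;
}

/* Read /proc/meminfo and return the "Cached" value in kB, or -1. */
long cached_kb(void)
{
    static char buf[8192];
    FILE *f = fopen("/proc/meminfo", "r");
    if (!f)
        return -1;
    size_t n = fread(buf, 1, sizeof buf - 1, f);
    fclose(f);
    buf[n] = '\0';
    return meminfo_kb(buf, "Cached");
}
```

Sampling cached_kb() before and after an O_DIRECT run shows whether the filesystem kept filling its cache.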

Well, let's see now what is different in Write performance.

Write-Only @EXT3:

Observations :

- the worst performance here is with 1K blocks.. - as the default EXT3 block size is 4K, 1K writes involve a read-on-write (it has to read the 4K block first, apply the 1K change within it, and then write the 4K block back..)

- read-on-write does not happen on 1K when the O_DIRECT flag is used: we're really writing 1K here

- however, O_DIRECT writes do not scale at all on EXT3! - and this finally explains to me WHY I always got worse performance when I tried the O_DIRECT flush option in InnoDB on an EXT3 filesystem! ;-))

- interestingly, the highest performance here is obtained with the O_SYNC flag, and we're not far from the 5000 writes/sec the storage is capable of..
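The read-on-write penalty happens whenever a buffered write does not cover whole filesystem blocks. Below is a minimal sketch that tells whether a write of len bytes at offset off would force the filesystem to read a block first. It assumes a 4K filesystem block size as in the text. The helper names are hypothetical, and the sketch ignores blocks that may already be in the page cache.

```c
#include <assert.h>
#include <stdint.h>

/* Number of filesystem blocks touched by a write of len bytes at off. */
uint64_t fs_blocks_touched(uint64_t off, uint64_t len, uint64_t fs_bs)
{
    if (len == 0)
        return 0;
    return (off + len - 1) / fs_bs - off / fs_bs + 1;
}

/* 1 if the first or last touched block is only partially overwritten,
 * i.e. its old content must be read before writing it back. */
int needs_read_modify_write(uint64_t off, uint64_t len, uint64_t fs_bs)
{
    return len != 0 && (off % fs_bs != 0 || (off + len) % fs_bs != 0);
}
```

A 1K write at offset 0 on a 4K-block filesystem touches one block only partially, so it needs a read-on-write. A 16K write at a 4K-aligned offset covers four whole blocks and does not.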

Write-Only @EXT4:

Observations :

- similar to EXT3, but with worse performance

- interestingly, only with the O_SYNC flag is the performance comparable with EXT3; in all other cases it's simply worse..

- I may suppose here that EXT3 is not flushing on every fsync() or fdatasync(), and that's why it performs better with these options ;-)) this needs investigation.. But anyway, the result is the result..
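One way to investigate this hypothesis is to time each fsync()/fdatasync() call after a small write. If the median latency is far below what the device can physically commit, the calls are probably not reaching stable storage, or a write cache is absorbing them. Here is a minimal Python sketch with a hypothetical helper name. On its own it cannot prove what the filesystem does internally.

```python
import os
import statistics
import time


def fsync_latency_ms(path: str, block: int = 4096, count: int = 200,
                     use_fdatasync: bool = True) -> float:
    """Write `count` blocks, syncing after each one; return the median sync latency in ms."""
    # os.fdatasync is missing on some platforms (e.g. macOS); fall back to fsync.
    sync = getattr(os, "fdatasync", os.fsync) if use_fdatasync else os.fsync
    data = b"\0" * block
    samples = []
    fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o644)
    try:
        for i in range(count):
            os.pwrite(fd, data, i * block)
            t0 = time.perf_counter()
            sync(fd)
            samples.append((time.perf_counter() - t0) * 1000.0)
    finally:
        os.close(fd)
    return statistics.median(samples)
```

Running the same probe on EXT3 and EXT4 on the same device, with both `use_fdatasync` settings, would show whether the sync calls cost the same on both.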

What about XFS?..

Write-Only @XFS:

Observations :

- XFS results are quite different from those of EXT3 and EXT4

- I've used a default XFS setup here and, curiously, did not observe the impact of the missing "nobarrier" option which was reported by Vadim in the past..

- on 1K block writes only O_DIRECT works well, but unlike on EXT3/EXT4 it also scales ;-) (the other options give poor results due to the same read-on-write issue..)

- 4K block writes scale well with O_SYNC and O_DIRECT, but remain poor with the other options

- 16K writes show some anomalies: while with O_SYNC nothing goes wrong and it scales well, with O_DIRECT some kind of serialization happens at 4 and 16 concurrent user processes.. - and then at 64 users things come back to normal.. Interestingly, this was not observed with 4K block writes.. Which reminds me of last year's discussion about the page size in InnoDB for SSD, and the gain reported with a 4K page size vs 16K.. - just keep in mind that sometimes it may not be related to SSD at all, but just to some filesystem internals ;-))

- anyway, no doubt - if you have to use O_DIRECT in your MySQL server - use XFS! :-)

Now, what is the difference between a "default" XFS configuration and a "tuned" one?..

I've recreated XFS with a 64MB log size and mounted it with the following options:

`# mount -t xfs -o noatime,nodiratime,nobarrier,logbufs=8,logbsize=32k`
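A "tuned" run is only meaningful if the options really took effect, so it can be worth checking the active mount options before benchmarking. Below is a small Python sketch that reads /proc/mounts (Linux only). Keep in mind:

- the function names are hypothetical;
- the kernel may report options in a normalized form, or reject options that a given version no longer supports.

```python
from typing import List


def mount_options(mountpoint: str, mounts_text: str) -> List[str]:
    """Return the option list for `mountpoint` from /proc/mounts-style text."""
    found = None
    for line in mounts_text.splitlines():
        fields = line.split()
        # Fields: device mountpoint fstype options dump pass
        # (spaces inside paths appear escaped as \040).
        if len(fields) >= 4 and fields[1] == mountpoint:
            found = fields[3].split(",")  # a later mount on the same path hides earlier ones
    if found is None:
        raise KeyError(f"{mountpoint} is not mounted")
    return found


def missing_options(mountpoint: str, wanted: List[str],
                    path: str = "/proc/mounts") -> List[str]:
    """Return the wanted options that are NOT active on `mountpoint`."""
    with open(path) as f:
        active = mount_options(mountpoint, f.read())
    return [opt for opt in wanted if opt not in active]
```

For example, `missing_options("/data", ["noatime", "nobarrier", "logbufs=8", "logbsize=32k"])` returns an empty list when all four are active. The `/data` mount point is only an assumption.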

The results are the following..

Write-Only @XFS-tuned:

Observations :

- everything is similar to the "default" config, except that there is no more problem with 16K block size performance

- so this 16K anomaly observed before is probably something random, hard to say.. - but at least I saw it, so I cannot ignore it ;-))

Then, keeping in mind that XFS performs so well on 1K block writes, I was curious to see whether things would go even better if I created my XFS filesystem with a 1K block size instead of the default 4K..
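The read-on-write effect seen on EXT3 explains why a 1K filesystem block size looks promising, and a tiny model captures it. The sketch below counts device bytes read and written for one buffered write. It is a simplification: the helper is hypothetical, and the model ignores page-cache granularity, journaling and metadata.

```python
from typing import Tuple


def write_io_bytes(offset: int, length: int, fs_block: int,
                   cached: bool = False) -> Tuple[int, int]:
    """Model (bytes_read, bytes_written) for one buffered write.

    Hypothetical read-on-write model: a write that only partially covers a
    filesystem block forces that block to be read first (unless it is
    already cached); whole blocks are then written back.
    """
    first = offset // fs_block
    last = (offset + length - 1) // fs_block
    bytes_written = (last - first + 1) * fs_block
    bytes_read = 0
    if not cached:
        head_partial = offset % fs_block != 0
        tail_partial = (offset + length) % fs_block != 0
        if head_partial:
            bytes_read += fs_block
        # Read the last block too, unless it is the same block already read.
        if tail_partial and not (last == first and head_partial):
            bytes_read += fs_block
    return bytes_read, bytes_written
```

With `fs_block=4096`, a 1K write at offset 0 costs `(4096, 4096)`, i.e. a 4K read plus a 4K write. With `fs_block=1024` it drops to `(0, 1024)`. The model says nothing about how O_DIRECT behaves with a given block size.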

Write-Only @XFS-1K:

Observations :

- when XFS is created with a 1K block size, there is no more read-on-write issue on 1K writes..

- and we're really writing 1K..

- however, the performance is very poor.. even on 1K writes with O_DIRECT!!!

- why?..

The answer came from the Random Reads test on the same XFS filesystem, created with a 1K block size.

Read-Only @XFS-1K:

Observations :

- if you've followed me until now, you'll understand everything from the last graph, reporting RAM usage.. ;-))

- the 8GB of RAM that was previously free is no longer free here..

- so, XFS is not using O_DIRECT here!

- and you can also see that for all reads except O_DIRECT, it's reading 4K for every 1K, which is abnormal..

Instead of SUMMARY

- I'd say the main point here is: "test your I/O subsystem performance before deploying your MySQL server" ;-))

- avoid using the "cfq" I/O scheduler :-)

- if you've decided to use the O_DIRECT flush method in your MySQL server, deploy your data on XFS..

- it seems to me the main reason why people use O_DIRECT with MySQL is the wish to avoid dealing with various filesystem cache issues.. - so there is probably something that needs to be improved in the Linux kernel, no? ;-)

- it could be very interesting to see similar test results on other filesystems too..

- things may look better with a newer Linux kernel..
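To follow the "avoid cfq" advice, you first need to know which scheduler each device actually uses. Here is a minimal Python sketch for Linux only, with hypothetical function names. It reads the sysfs scheduler files, where the kernel marks the active scheduler with brackets.

```python
import glob
import os
from typing import Dict, List, Tuple


def parse_scheduler(text: str) -> Tuple[str, List[str]]:
    """Parse /sys/block/<dev>/queue/scheduler content, e.g. "noop deadline [cfq]"."""
    names = text.split()
    active = next((n.strip("[]") for n in names if n.startswith("[")), "none")
    return active, [n.strip("[]") for n in names]


def active_schedulers() -> Dict[str, str]:
    """Map each block device to its active I/O scheduler."""
    result: Dict[str, str] = {}
    for path in glob.glob("/sys/block/*/queue/scheduler"):
        device = path.split(os.sep)[3]  # /sys/block/<dev>/queue/scheduler
        with open(path) as f:
            result[device] = parse_scheduler(f.read())[0]
    return result
```

Any device that reports `cfq` is a candidate for switching, for example by writing `deadline` into the same sysfs file as root. On newer multi-queue kernels, cfq no longer exists, and the choices are names such as `mq-deadline`, `kyber`, `bfq` or `none`.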

So far, I've got some answers to my WHY questions.. Now it would be good to find the time to test it directly with MySQL ;-)

Any comments are welcome!

Rgds,

-Dimitri

Tuesday, 21 April, 2009

MySQL Performance: 5.4 outperforms PostgreSQL 8.3.7 @dbSTRESS !

Forgot to say, I've also tested PostgreSQL 8.3.7 during the last benchmark series with dbSTRESS!

A big surprise: two years ago PostgreSQL was two times faster on the same workload (see: http://dimitrik.free.fr/db_STRESS_BMK_Part2_ZFS.html ), and now it's MySQL 5.4 outperforming PostgreSQL!

- Read-Only workload: MySQL is now nearly two times faster! (13.500 TPS vs ~7.000 TPS for PostgreSQL)

- Read+Write workload: MySQL performs as well or better (7.000-8.000 TPS vs 6.000-7.000 TPS for PostgreSQL)

For more details: http://dimitrik.free.fr/db_STRESS_MySQL_540_and_others_Apr2009.html#note_5443