« June 2009 | Main | April 2009 »

Monday, 25 May, 2009

MySQL Performance: InnoDB Dirty Pages & Log Size Impact

The beginning of this story is coming from the first weeks of the last year - seeking for the most optimal MySQL config parameters I've discovered a strange thing:

- my dirty pages percentage setting 15 was completely ignored by InnoDB during my tests!...

- once the test workload was finished it still took 30 minutes yet to flush dirty pages!...

I've told about a lot of people on that time, but everybody were just surprised and did not really believe such case is possible... Agree, it's hard to believe ;-)

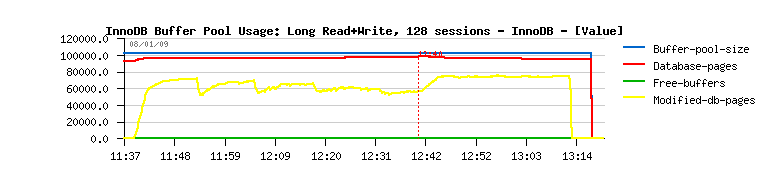

So, to refresh the story, let me first to show you the following picture:

As you may see here the dirty pages percentage is higher than 15% for sure :-) As well you may see the 30 minutes of dirty pages flushing once the workload is finished (too much according to me on the idle server! - and probably recovery process will take even more time if my server will crash?...)

It looked abnormal for me, and what I found on that time - reducing log size to 128MB gave less or more better stability and dirty pages flushing always finished in few minutes at the end of each workload!

My understanding was :

- the dirty pages percentage is completely ignored by InnoDB

- the only thing which limit a number of dirty pages is the log size!

- once redo log has no more free blocks it'll force dirty pages flushing to to continue its work!

- the most optimal redo log size I've found on that time was 128MB

So, all my MySQL tests I've done until now were executed with innodb_log_file_size=128M. And I've started the current study to bring some lights on previously found issue and whenever possible to understand what's going wrong...

The full report about this study you may find here: http://dimitrik.free.fr/db_STRESS_MySQL_InnoDB_dirty_pages_and_log_size_impact_May2009.html

Hope it explains well what's going wrong with InnoDB on my tests. I'm also curious if somebody else met similar problems, or probably discovering them now :-) I think the found bug is important because it's touching InnoDB internals and when things are not working at all as expected - it's important to know. As well seeing buffer pool cache wasted was not fun either...

Don't know how easy it'll be to fix, but maybe you already have your idea? ;-)

Any comments are welcome! :-)

Monday, 18 May, 2009

So, Why finally PostgreSQL is slower on db_STRESS Benchmark comparing to MySQL ?...

Last month when I've published my results obtained with PostgreSQL 8.3.7 on db_STRESS benchmark I was surprised they were much lower comparing to MySQL, as well some signs alarmed me there is something goes wrong with PostgreSQL... So, once it was possible, I took my time and prepared another testing on the same M5000 server I published MySQL results last week.

I would say I discovered a lot of new things benchmarking PostgreSQL ! I'm not kidding :-) And without going too much in detail, the main gain seems to me of MySQL over PostgreSQL was a lower cost on executing a single query. The query in question was the second SELECT in db_STRESS.

SELECT-2 execution time:

- MySQL 5.4: 0.44ms

- PostgreSQL 8.4: 1.3ms

- PostgreSQL 8.4 prepared statement: 0.98ms

Again, if PostgreSQL being slower on a single query scaled much more far on say 24 cores - it'll be not really a problem. But the result on 24 cores is only slightly better than on 16, and still lower comparing to MySQL... Of course, it's only true since MySQL 5.4 :-)

However, PostgreSQL made a big progress and performs very well on the mixed workload (Read+Write). Since 8.3 version it has a kind of "delayed" checkpoint - instead to flush all modified data at one (like it was in 8.2 and earlier) it has an option to say "I give you N sec to flush all data, don't need to stress, but finish work on time!" - and it works very well! :-)

Here are some PostgreSQL results on db_STRESS (as usual, X-axis representing a number of concurrent users (sessions), Y-axis representing a TPS level) - oh, and "prep" in name means prepared statements, buf4096 - means buffer pool set to 4096MB, etc. (all test descriptions are explained in the final report):

Read-Only Workload

Read+Write Workload

Scalability issue

As usually, all other details you may find in the full report: http://dimitrik.free.fr/db_STRESS_PostgreSQL_837_and_84_May2009.html

Any comments are welcome! :-)

Tuesday, 12 May, 2009

MySQL Performance: MySQL 5.4, XtraDB-5 and others @dbSTRESS Benchmark

Here are my new db_STRESS results obtained on the latest Sun M5000 server (SPARC64-VII 8CPU quad-core / bi-thread 2400Mhz). I was very curious to retest the "official" MySQL 5.4 on this machine as well other versions and InnoDB engines. But on the same time the new XtraDB version 5 was announced. As well Google shipped their v3 patch. How to resist? :-) (I'll try to be short :-))

MySQL 5.0 & 5.1

There is no more performance gap between 5.0 and 5.1 as I observed before , both versions are having near the same performance and both are way slower comparing to others :-) So, honestly, if you have any performance problem - why do not migrate to 5.4, XtraDB, or at least the latest InnoDB plugin?..

XtraDB Performance Improvements

I was very pleasantly surprised by performance improvements done in XtraDB-5! It's more performant comparing to the XtraDB-3, and absolutely free of freezes observed with XtraDB-4 on my tests. And it outperforms InnoDB plugin as well. Great work!

I've tested them all, and also executed a special run with Adaptive Checkpoint disabled - after Vadim' post I wanted to be sure I did not test XtraDB in bad conditions (from what I saw until now Adaptive Checkpoint always gave me the best result). Result of this test is labeled "XtraDB-5-AdpOFF", and as you may see it's better to keep Adaptive Checkpoint enabled :-) Also, in the middle of my tests Percona uploaded the new XtraDB code update, I've labeled it "XtraDB-5.1" to differentiate it from the previous one :-)

The only question I don't have an answer: why on changing innodb thread concurrency from 0 to 16 there is a so huge performance drop from 17.500 TPS to 12.000 TPS.. (there was no drop with XtraDB-3 before and now)

Google v3 patch

Even if it was very interesting to port Google v3 patched MySQL to Solaris, I was not sure at all about the final build :-) And as by several signs I supposed it doesn't work as it should - I did not publish here any results with this code. However from the full report you may follow my steps and probably correct and advice me if I'm doing something wrong...

Some of my Final Results

I'm presenting only some of my final results here, but all other results and more details you may find in the full report . The winner is still MySQL 5.4 here. But from the full report you may find a single case when XtraDB is outpassed all others :-)

Few graphs about scalability

There is a very small performance gain when moving from 8 to 16 cores: only 25% on Read-Only workload, and near nothing on Read+Write. But as I said before the progress is huge because there is no more performance regression either! :-) So for me the current goal in MySQL code improvement should be to obtain at least 75% performance gain between 8 and 16 cores, not agree? ;-))

The complete full report is here: http://dimitrik.free.fr/db_STRESS_MySQL_540_and_others_May2009.html

Any comments are welcome! :-)