
Friday, 16 November, 2012

MySQL Performance: InnoDB vs MyISAM in 5.6

Since the recent changes in the InnoDB code (MySQL 5.6) to improve OLTP Read-Only performance and add support for full-text search (FTS), I was curious to compare it with MyISAM..

While there was no doubt that using MyISAM as a storage engine for heavy RW workloads may very quickly become problematic due to its table-lock-on-write design, Read-Only workloads still remained favorable for MyISAM due to its extreme simplicity in data management (no transaction read-view overhead, etc.), and especially when FTS was required, where until now MyISAM was the only MySQL engine able to cover this need.. But then FTS came to InnoDB, and the open question for me now is: is there still any reason to use MyISAM for RO OLTP or FTS workloads from a performance point of view, or can InnoDB now cover this as well?..

For my tests I will use:

- Sysbench for OLTP RO workloads

- for FTS: a slightly remastered test case with the "OHSUMED" data set (freely available on the Internet)

- All the tests are executed on a 32-core Linux box

- Because internal MySQL / InnoDB / MyISAM contention means some workloads may give better results when MySQL runs on fewer CPU cores, I've used Linux "taskset" to bind the mysqld process to a fixed number of cores (32, 24, 16, 8, 4)
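The core binding can be sketched as follows. This is only a minimal illustration: the post does not say which cores were picked for each configuration, so taking the first N cores is an assumption, and the PID is hypothetical.

```python
import shlex
from typing import List


def taskset_command(pid: int, cores: int) -> List[str]:
    """Build a taskset call pinning a running process to cores 0..cores-1."""
    if cores < 1:
        raise ValueError("cores must be >= 1")
    # Assumption: use the first N cores; the post does not state the layout.
    cpu_list = "0" if cores == 1 else f"0-{cores - 1}"
    # -a: all threads of the process, -p: existing PID, -c: CPU list format
    return ["taskset", "-a", "-p", "-c", cpu_list, str(pid)]


MYSQLD_PID = 12345  # hypothetical PID of mysqld
for cores in (32, 24, 16, 8, 4):
    print(shlex.join(taskset_command(MYSQLD_PID, cores)))
```

The `-a` flag matters for a multi-threaded server like mysqld: without it only the given task gets the new affinity, while threads that already exist keep their old one.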

Let's have a look at the FTS performance first.

The OHSUMED test contains a data set of less than 1GB and 30 similar FTS queries, which differ only by the key word they use. However, not every query returns the same number of rows, so to keep the average load comparable between different tests, I'm executing the queries in a loop rather than invoking them randomly.

The schema is the following:

    CREATE TABLE `ohsumed_innodb` (
      `docid` int(11) NOT NULL,
      `content` text,
      PRIMARY KEY (`docid`)
    ) ENGINE=InnoDB DEFAULT CHARSET=latin1;

    CREATE TABLE `ohsumed_myisam` (
      `docid` int(11) NOT NULL,
      `content` text,
      PRIMARY KEY (`docid`)
    ) ENGINE=MyISAM DEFAULT CHARSET=latin1;

    alter table ohsumed_innodb add fulltext index ohsumed_innodb_fts(content);
    alter table ohsumed_myisam add fulltext index ohsumed_myisam_fts(content);

And the FTS query looks like this:

SQL> SELECT count(*) as cnt FROM $(Table) WHERE match(content) against( '$(Word)' );

The $(Table) and $(Word) variables are replaced on the fly during the test, depending on which table (InnoDB or MyISAM) and which key word is used in the given query.
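The substitution and the fixed-order loop can be sketched like this. It is not the actual test driver (which is not shown in the post), just an illustration of the idea; only the first few key words are included here.

```python
from itertools import cycle, islice

QUERY_TEMPLATE = (
    "SELECT count(*) AS cnt FROM {table} "
    "WHERE match(content) against('{word}')"
)

# Only the first few of the 30 key words used in the test.
WORDS = ["Pietersz", "REPORTS", "Shvero", "Couret"]


def fts_queries(table, words):
    """Yield FTS queries endlessly, always in the same word order."""
    # Plain string formatting is acceptable here only because the word
    # list is fixed and trusted; user input would need proper escaping.
    for word in cycle(words):
        yield QUERY_TEMPLATE.format(table=table, word=word)


for sql in islice(fts_queries("ohsumed_innodb", WORDS), 5):
    print(sql)
```

Looping in a fixed order means every run sees the same mix of cheap and expensive queries, which is what keeps the average load comparable between tests.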

And there are 30 key words, each one returning the following number of records in the query result:

    ------------------------------------------------------------
    Table: ohsumed_innodb
    ------------------------------------------------------------
     1. Pietersz : 6
     2. REPORTS : 4011
     3. Shvero : 4
     4. Couret : 2
     5. eburnated : 1
     6. Fison : 1
     7. Grahovac : 1
     8. Hylorin : 1
     9. functionalized : 4
    10. phase : 6676
    11. Meyers : 157
    12. Lecso : 0
    13. Tsukamoto : 34
    14. Smogorzewski : 5
    15. Favaro : 1
    16. Germall : 1
    17. microliter : 170
    18. peroxy : 5
    19. Krakuer : 1
    20. APTTL : 2
    21. jejuni : 60
    22. Heilbrun : 9
    23. athletes : 412
    24. Odensten : 4
    25. anticomplement : 5
    26. Beria : 1
    27. coliplay : 1
    28. Earlier : 2900
    29. Gintere : 0
    30. Abdelhamid : 4
    ------------------------------------------------------------

Results are exactly the same for MyISAM and InnoDB, while the response times are not. Let's go into details now.

FTS : InnoDB vs MyISAM

The following graphs represent the results obtained with:

- MySQL running on 32, 24, 16, 8, 4 cores

- The same FTS queries executed non-stop in a loop by 1, 2, 4, .. 256 concurrent users

- So, the first part of each graph represents the 1-256 users test on 32 cores

- The second part is the same, but on 24 cores, and so on..

- On the first graph, once again, Performance Schema (PFS) is helping us to understand internal bottlenecks: you'll see the wait events reported by PFS

- And on the second one, the queries/sec (QPS) reported by MySQL

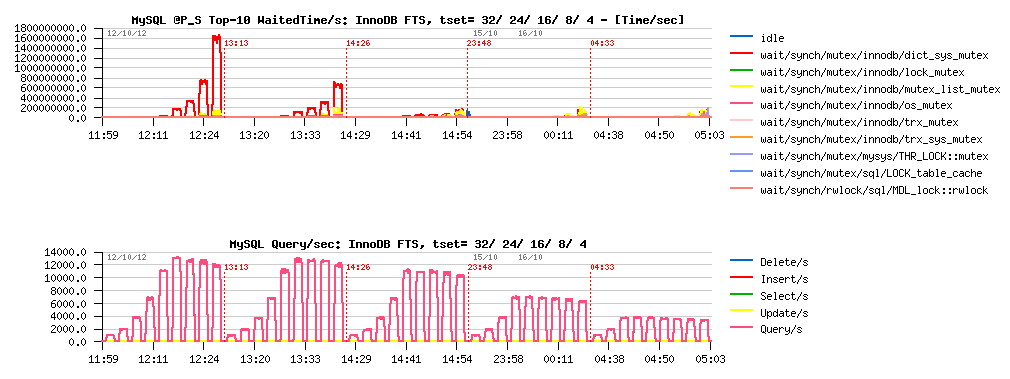

InnoDB FTS :

Observations :

- InnoDB FTS is scaling well from 4 to 16 cores, then performance increases only slightly due to contention on the dictionary mutex..

- However, there is no regression up to 32 cores, and performance continues to increase

- The best result is 13000 QPS on 24 or 32 cores

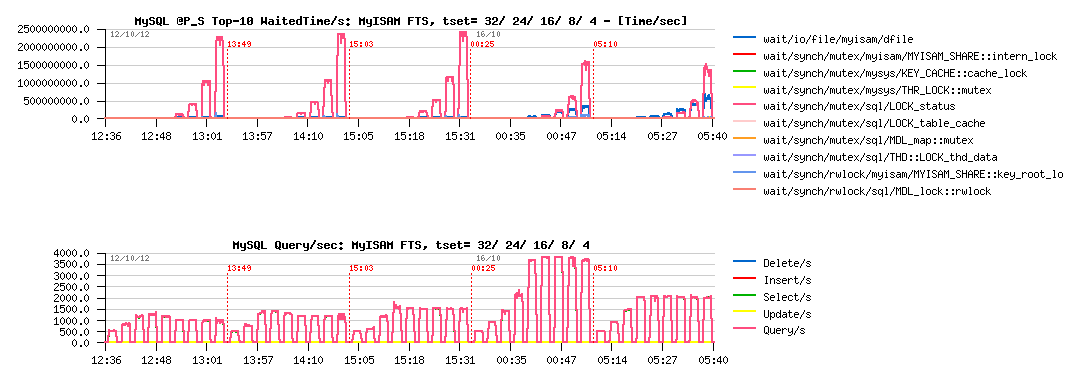

MyISAM FTS :

Observations :

- MyISAM FTS is scaling only from 4 to 8 cores, and then regresses with more cores..

- The main contention is on the LOCK_status mutex

- The best result is 3900 QPS on 8 cores

What about this LOCK_status mutex contention?.. It gives the impression of a killer bottleneck: if it were resolved, could we expect MyISAM to scale much higher and maybe reach 16000 QPS on 32 cores?..

Well, I'd prefer a real result rather than an expectation here ;-) So, I've opened the MyISAM source code and looked for the LOCK_status mutex usage. In fact this mutex is mainly used to protect table status and other counters. Sure, this code could be implemented better to avoid any blocking on counters at all. But my goal here is just to validate the potential impact of a potential fix -- supposing there is no more contention on this mutex, what kind of result may we expect then??

So, I've compiled an experimental MySQL binary with the calls to the LOCK_status mutex commented out within the MyISAM code, and here is the result:

MyISAM-noLock FTS :

Observations :

- LOCK_status contention is gone

- But its place is taken now by data file read waits... - keeping in mind that all data are already in the file system cache...

- So, the result is slightly better, but data file contention is killing scalability

- Seems like absence of its own cache buffer for data is the main show-stopper for MyISAM here (while FTS index is well cached and key buffer is bigger than enough)..

- The best result now is 4050 QPS still obtained on 8 cores


NOTE :

- using mmap() (myisam_use_mmap=1) did not help here, and even added MyISAM mmap_lock contention

- interestingly, during this RO test MyISAM performance was better on XFS and worse on EXT4 (just another point for the XFS vs EXT4 discussion for MySQL) -- particularly curious because the whole data set was cached by the filesystem..

So far:

- InnoDB FTS is at least 3x faster than MyISAM on this test

- It's also 1.5x faster on 8 cores, where MyISAM shows its top result, and 2x faster on 4 cores too..

- And once the dictionary mutex contention is fixed, InnoDB FTS performance will be even better!
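As a quick sanity check, the "at least 3x" ratio can be recomputed from the best-QPS figures quoted in the observations. Only the top results are given as numbers in the text; the per-core 1.5x and 2x ratios come from the graphs and are not recomputed here.

```python
# Best QPS per configuration, as quoted in the observations.
best_qps = {
    "InnoDB": 13000,        # on 24 or 32 cores
    "MyISAM": 3900,         # on 8 cores
    "MyISAM-noLock": 4050,  # experimental binary, on 8 cores
}


def speedup(fast, slow):
    """Ratio of two throughput figures."""
    return fast / slow


print(round(speedup(best_qps["InnoDB"], best_qps["MyISAM"]), 2))         # 3.33
print(round(speedup(best_qps["InnoDB"], best_qps["MyISAM-noLock"]), 2))  # 3.21
print(round(speedup(best_qps["MyISAM-noLock"], best_qps["MyISAM"]), 2))  # 1.04
```

The last line shows how little the LOCK_status experiment bought on its own: about 4% on the best result.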

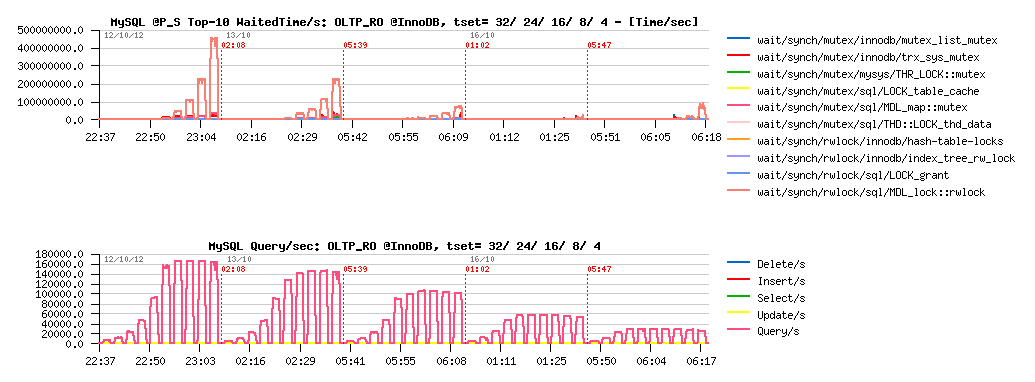

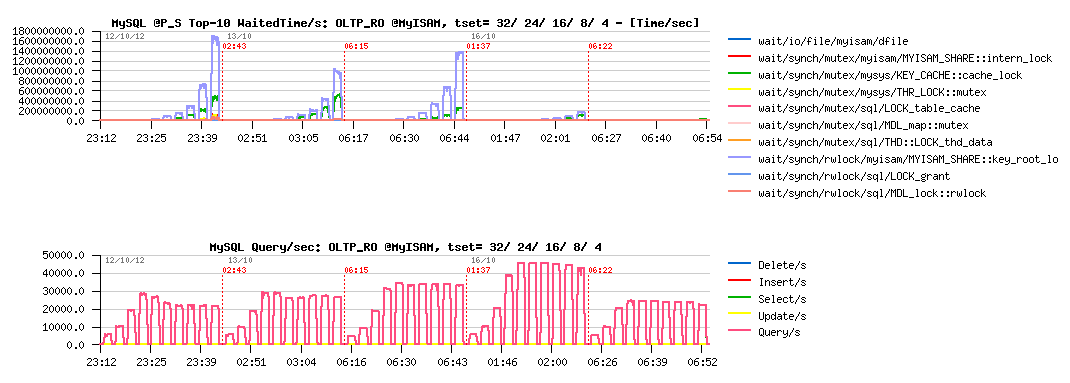

OLTP Read-Only : InnoDB vs MyISAM

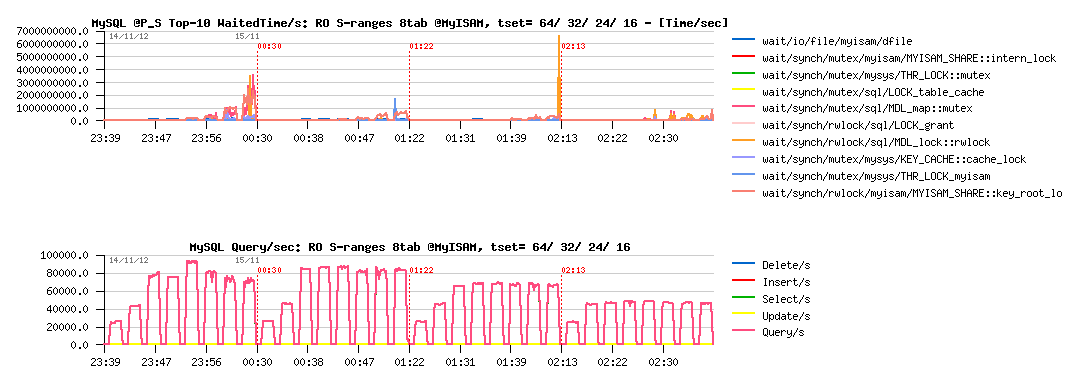

As a starting point, I've used the "classic" Sysbench OLTP workloads, which access a single table in a database. Single-table access is not favorable for MyISAM, so I will not even comment on each result, and will just note that:

- the main bottleneck in MyISAM during this test is on the "key_root_lock" and "cache_lock" mutexes

- if I understood well, a solution for the "cache_lock" contention in such a workload was proposed with cache segments in MariaDB

- however, it may help only in the POINT SELECTS test (where cache_lock contention is the main bottleneck)

- while in all other tests the "key_root_lock" contention is dominating, and for the moment remains unfixed..

- using a partitioned table with a per-partition key buffer should help MyISAM here, but I'll simply use several tables in the next tests

- InnoDB performance is only limited by MDL locks (MySQL layer), so it is expected to be even better once the MDL code is improved

- in the following tests InnoDB is 3-6x faster than MyISAM..

Sysbench OLTP_RO @InnoDB :

Sysbench OLTP_RO @MyISAM :

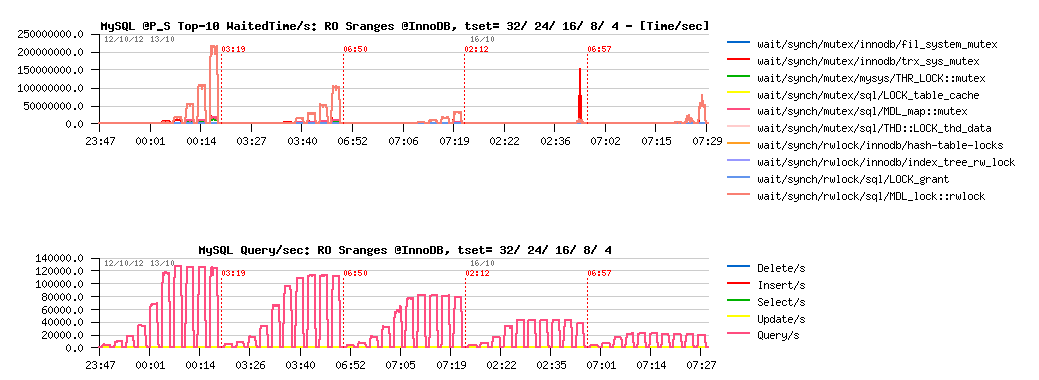

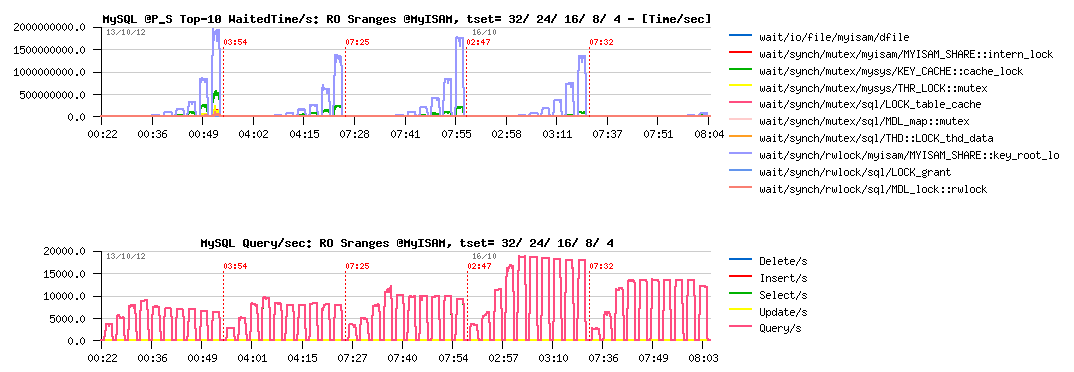

Sysbench Simple-Ranges @InnoDB :

Sysbench Simple-Ranges @MyISAM :

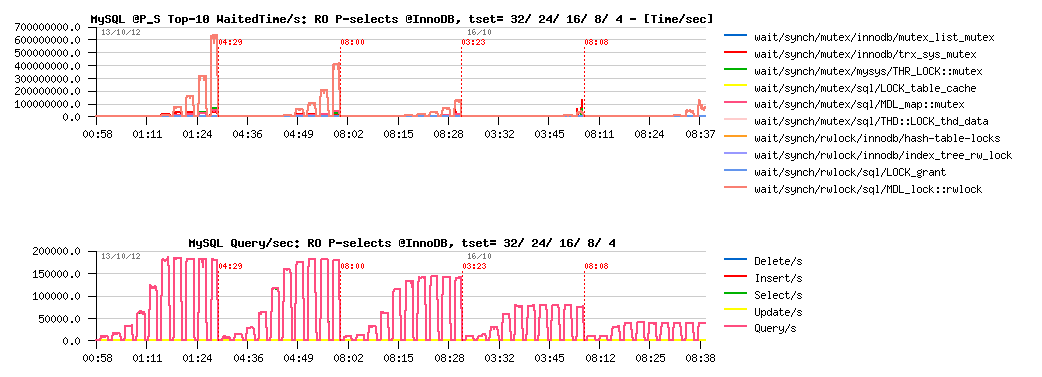

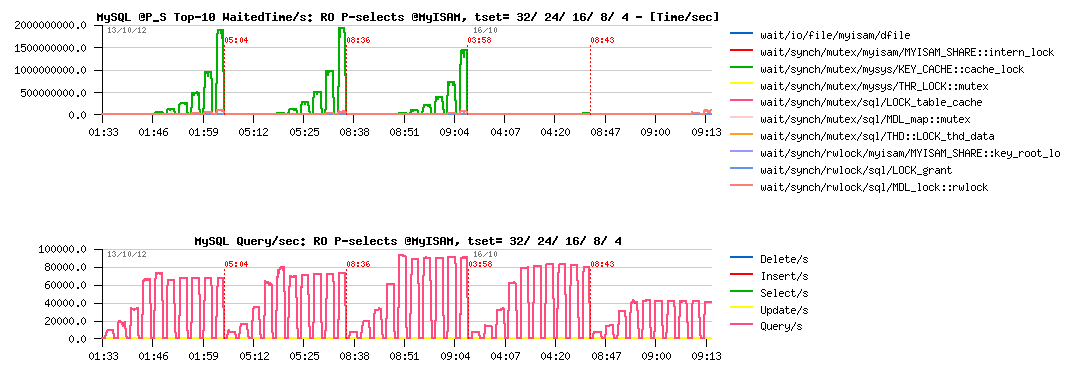

Sysbench Point-Selects @InnoDB :

Sysbench Point-Selects @MyISAM :

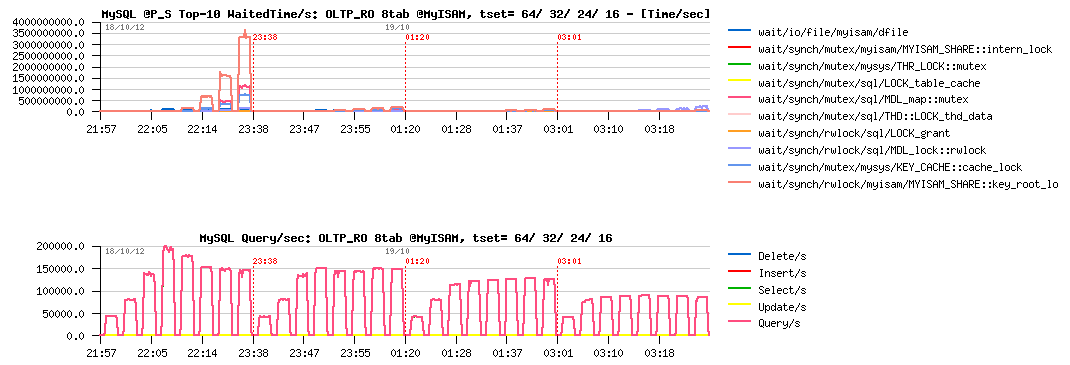

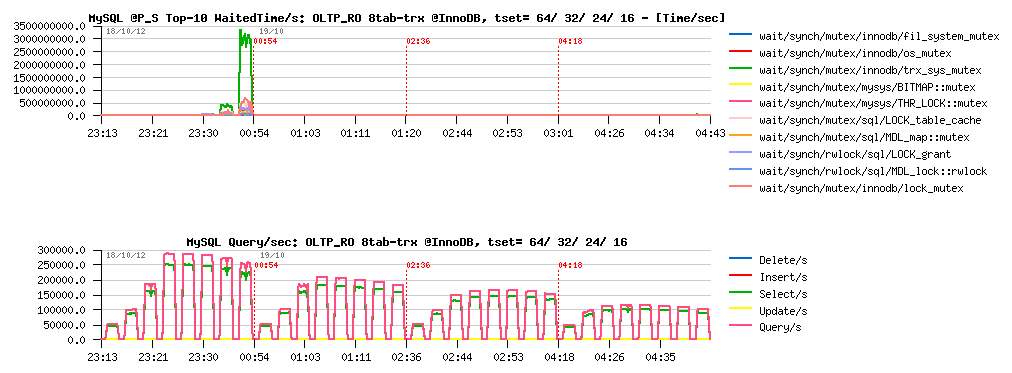

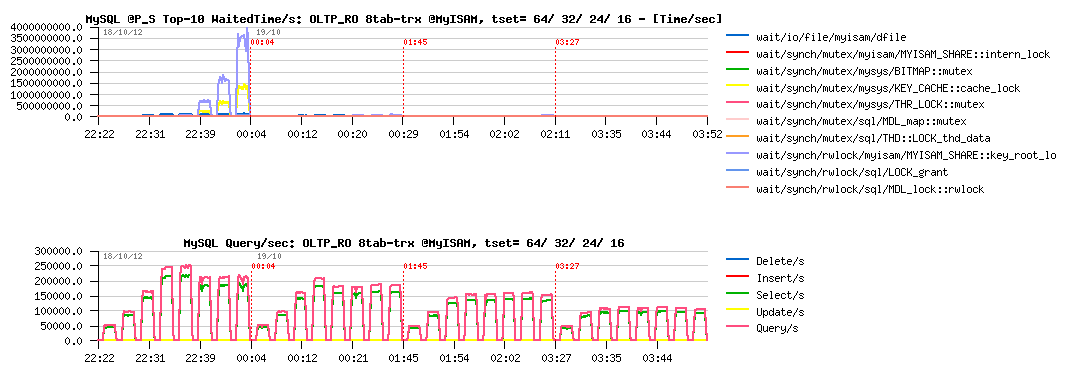

OLTP Read-Only with 8 tables : InnoDB vs MyISAM

The test with 8 tables becomes much more interesting, as it dramatically lowers key_root_lock contention in MyISAM, and MDL contention as well. However, in MyISAM we then hit key cache mutex contention, so 8 key buffers are used (one per table) to avoid it. With that, scalability is pretty good on all these tests, so I'm limiting the test cases to 64, 32, 24 and 16 cores (64 means 32 cores with both hardware threads enabled (HT)). Also, concurrent users start from 8, to use all 8 tables at a time.
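One way to get a key buffer per table is MySQL's named key caches. The sketch below only generates the statements; the table names (sbtest1..sbtest8), the cache names and the buffer size are assumptions for illustration, as the post does not give the exact settings.

```python
def key_cache_statements(tables, size_bytes):
    """Return SQL creating one named MyISAM key cache per table."""
    stmts = []
    for i, table in enumerate(tables, start=1):
        cache = f"kc{i}"  # hypothetical cache name
        # Setting a size for a named cache is what creates it.
        stmts.append(f"SET GLOBAL {cache}.key_buffer_size = {size_bytes}")
        # Route all index blocks of this table to its own cache.
        stmts.append(f"CACHE INDEX {table} IN {cache}")
    return stmts


tables = [f"sbtest{i}" for i in range(1, 9)]  # assumed table names
for stmt in key_cache_statements(tables, 1024 * 1024 * 1024):
    print(stmt + ";")
```

With each table's index blocks in a separate cache, threads working on different tables no longer compete for the same key cache mutex.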

Let's have a look at the OLTP_RO workload first :

Sysbench OLTP_RO 8-tables @InnoDB :

Sysbench OLTP_RO 8-tables @MyISAM :

Observations :

- InnoDB is still better on OLTP_RO than MyISAM..

- for InnoDB, the main bottleneck seems to be on the MDL related part

- for MyISAM - key_root_lock is still here (not as much as before, but still blocking)

- InnoDB is reaching 215K QPS max, and MyISAM 200K QPS

- As you see, speed-up is very significant for both storage engines when activity is not focused on a single table..

And to finish with this workload, let me present the "most curious" case ;-) -- this test profits from the fact that in auto-commit mode MySQL opens and closes the table(s) on every query, while if BEGIN / COMMIT transaction statements are used, the table(s) are opened at BEGIN and closed only at COMMIT. As an OLTP_RO "transaction" contains several queries, this gives a pretty visible speed-up! Which is visible even on MyISAM tables ;-)
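The difference between the two modes is only in the statements sent around the same queries, roughly as sketched below. The two SELECTs are hypothetical stand-ins, not the exact Sysbench query mix.

```python
def as_autocommit(queries):
    """Each query runs as its own implicit transaction."""
    return list(queries)


def as_transaction(queries):
    """The same queries wrapped into one explicit transaction."""
    return ["BEGIN"] + list(queries) + ["COMMIT"]


# Hypothetical stand-ins for the queries of one OLTP_RO "transaction".
queries = [
    "SELECT c FROM sbtest1 WHERE id = 42",
    "SELECT c FROM sbtest1 WHERE id BETWEEN 42 AND 141",
]

print(as_autocommit(queries))
print(as_transaction(queries))
```

The second form sends two extra statements per transaction, yet still comes out faster in the tests, which suggests the per-query overhead avoided is larger than the cost of BEGIN and COMMIT.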

So, I'm just turning transactions option "on" within Sysbench OLTP_RO:

Sysbench OLTP_RO 8-tables TRX=on @InnoDB :

Sysbench OLTP_RO 8-tables TRX=on @MyISAM :

Observations :

- InnoDB is going from 215K to 250K QPS

- MyISAM is going from 200K to 220K QPS

- there is definitely something to do with it.. ;-))

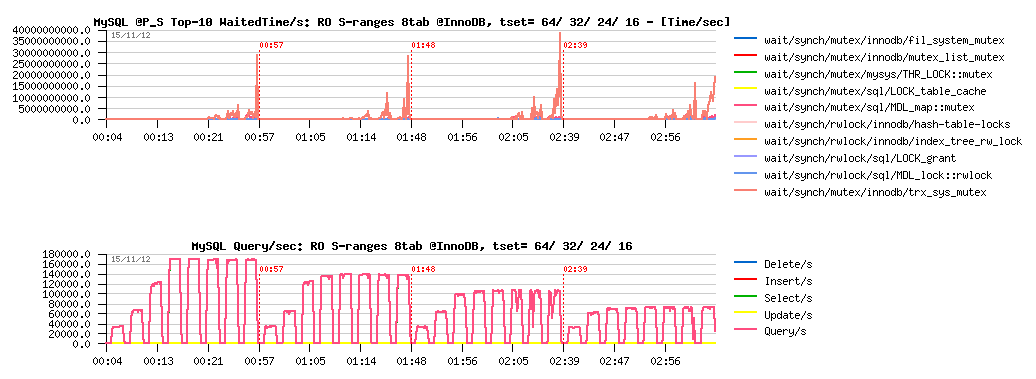

Now, what about SIMPLE-RANGES workload?

Sysbench RO Simple-Ranges 8-tables @InnoDB :

Sysbench RO Simple-Ranges 8-tables @MyISAM :

Observations :

- InnoDB is reaching 170K QPS here, mainly blocked by MDL related stuff..

- MyISAM is getting only 95K QPS max, seems to be limited by key_root_lock contention..

So far, InnoDB has won over MyISAM in every test case presented up to here.

But now have a look at the one case where MyISAM is still better..

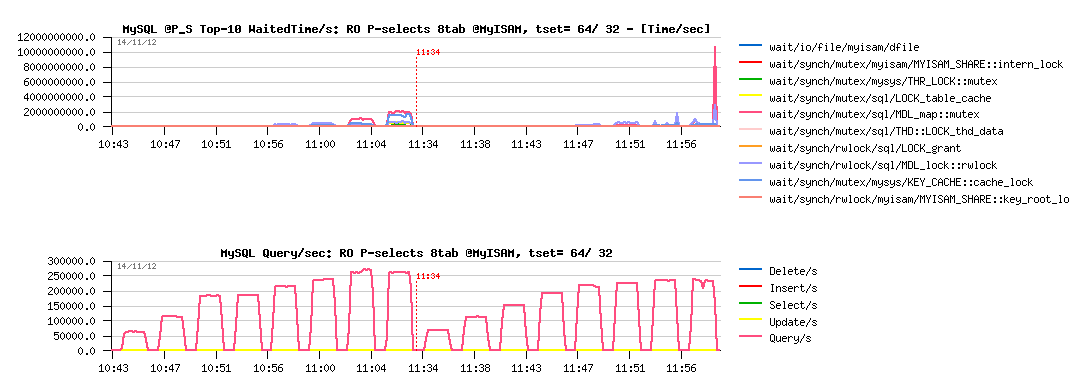

POINT-SELECTS WITH 8 TABLES

I'm dedicating a separate chapter to this particular test workload, as it was the only case I've tested where MyISAM outperformed InnoDB, so it requires a more detailed analysis here.. Both storage engines scale really well on this test, so I'm limiting the result graphs to the 64 (HT) and 32 cores configurations only.

Let's have a look at the MyISAM results on MySQL 5.6-rc1 :

Sysbench RO Point-Selects 8-tables @MyISAM 5.6-rc1 :

Observations :

- MyISAM is reaching 270K QPS max on this workload

- and starting to hit MDL-related contentions here!

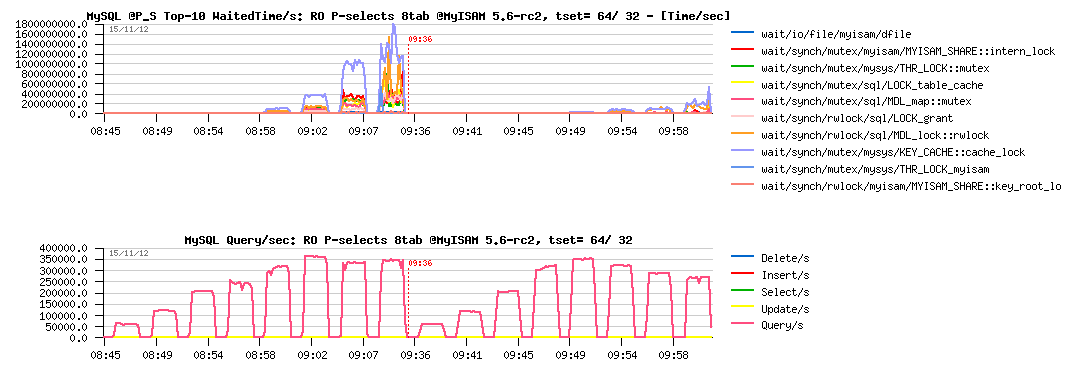

MySQL 5.6-rc2, however, already contains the first part of the MDL optimizations ("metadata_locks_hash_instances"), so we may now expect better results on workloads having MDL_map::mutex contention in the top position. So, let's see how it helps MyISAM here.

Sysbench RO Point-Selects 8-tables @MyISAM 5.6-rc2 :

Observations :

- Wow! - 360K QPS max(!) - this is a very impressive difference :-)

- then key cache lock contention is blocking MyISAM from going higher..

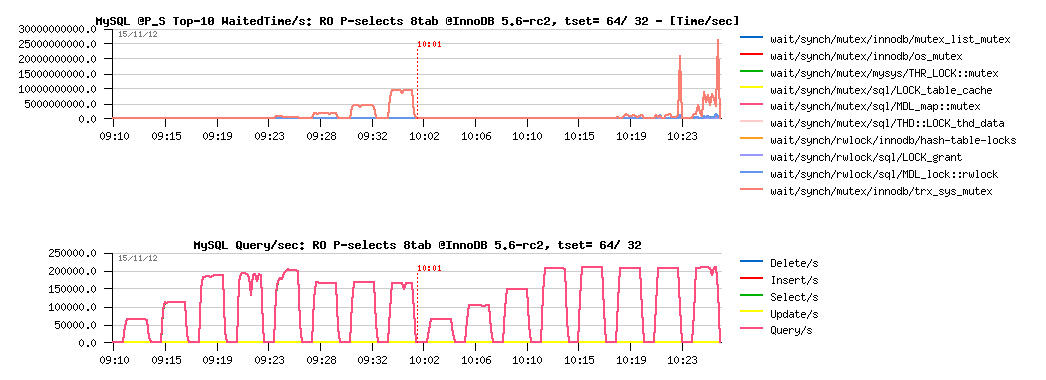

Then, what about InnoDB here?.. The problem with InnoDB is that even with the lighter code path of READ ONLY transactions it still creates and destroys a read view, and on a workload with such short and fast queries this overhead shows up very quickly:

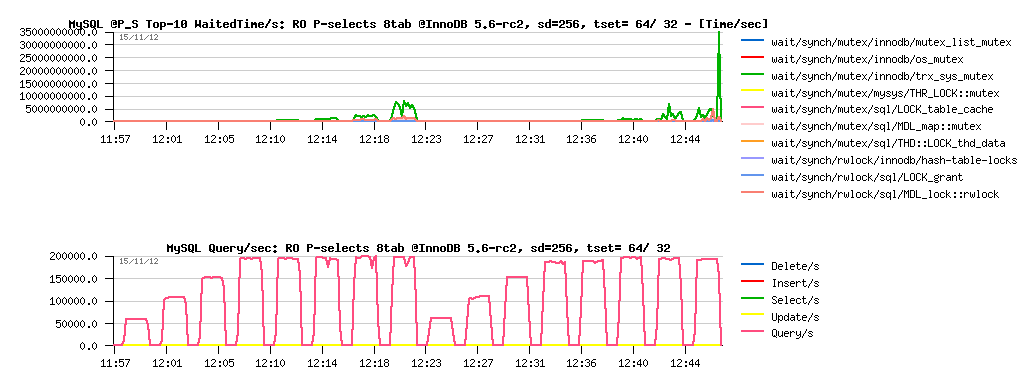

Sysbench RO Point-Selects 8-tables @InnoDB 5.6-rc2 :

Observations :

- InnoDB is reaching only 210K QPS max on this workload

- the main bottleneck is coming from trx_sys::mutex contention (related to read-views)

- this contention even causes a QPS drop on 64 threads (HT), so the result is better on pure 32 cores..

Such contention can still be hidden (yes, "hidden", which is different from "fixed" ;-)) -- we may try a bigger "innodb_spin_wait_delay" value. The change can be applied live on a running system, as the setting is dynamic. Let's now try innodb_spin_wait_delay=256 instead of the 96 that I usually use :

Sysbench RO Point-Selects 8-tables @InnoDB 5.6-rc2 sd=256 :

Observations :

- as you can see, the load is more stable now

- but we got a regression from 210K to 200K QPS..

So, a true fix for the trx_sys mutex contention is really needed here to go further. This work is in progress, so stay tuned ;-) Personally I'm expecting at least 400K QPS or more here on InnoDB (keeping in mind that MyISAM goes through the same code path to communicate with the MySQL server, has the syscall overhead of reading data from the FS cache, and still reaches 360K QPS ;-))

However, before finishing, let's see what max QPS numbers can be obtained on this server by reducing some internal overheads:

- I'll disable Performance Schema instrumentation

- and use prepared statements to reduce SQL parser time..

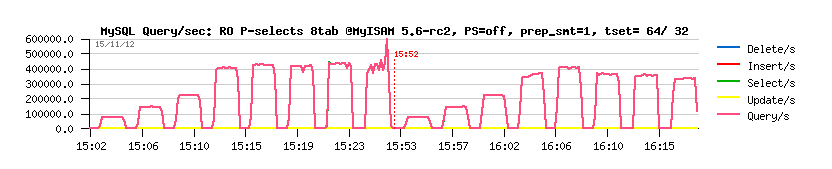

Sysbench RO Point-Selects 8-tables @MyISAM 5.6-rc2 PFS=off prep_smt=1 :

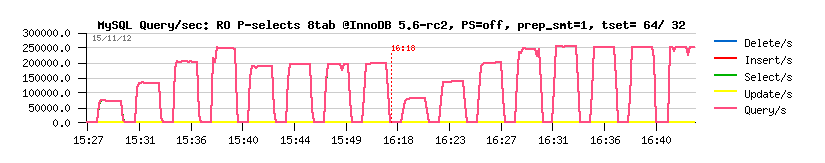

Sysbench RO Point-Selects 8-tables @InnoDB 5.6-rc2 PFS=off prep_smt=1 :

Observations :

- Wow! 430K (!) QPS max on MyISAM!...

- and 250K (!) QPS on InnoDB!

These results are great!.. And both are due to the great improvements made in the MySQL 5.6 code.

(especially keeping in mind that just one year ago on the same server I was unable to get more than 100K QPS on InnoDB ;-))

Still, I'd like to see something even better from InnoDB (even if I understand all the constraints of the transaction-related stuff, and so on)..

So, let me show you something ;-))

Starting from the latest MySQL 5.6 version, InnoDB has a "read-only" option to switch off all database writes globally for a whole InnoDB instance (innodb_read_only=1).. Today this option works very similarly to READ ONLY transactions, while it should do much better in the near future (because when we know that no changes to the data are possible, any transaction-related constraints may be ignored). And I think READ ONLY transactions may also work much better than today ;-))

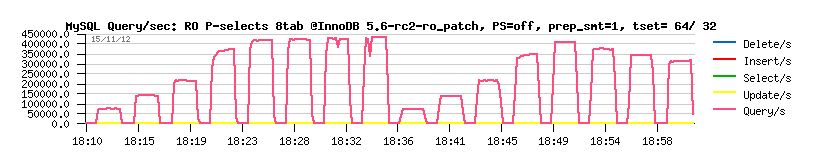

Sunny is working hard on improving this whole part of the code, and we currently have a prototype which gives us the following on the same workload :

Sysbench RO Point-Selects 8-tables @InnoDB 5.6-rc2-ro_patch PFS=off prep_smt=1 :

Observations :

- as you can see, we're reaching 450K (!) QPS within the same test conditions!!! :-)

- and that's on an old 32-core bi-thread server..

- it reminds me of the famous 750K QPS on "Handler Socket".. - as you see, we're getting closer and closer to it ;-)

- while still going through normal SQL and keeping all the other RDBMS benefits ;-)

- so, for all users hesitating between MySQL and a move to NoSQL land.. - you'll yet be surprised by MySQL power ;-))

INSTEAD OF SUMMARY

- InnoDB today seems to be way faster on FTS than MyISAM

- on OLTP RO workloads InnoDB is also faster than MyISAM, except on point selects, but this gap should be closed too in the near future ;-)

- if you did not try MySQL 5.6 yet -- please, do! -- it's already great, but with your feedback it will be even better! ;-)

And what kind of performance difference are you observing in your workloads?..

Please, share!..

Monday, 05 November, 2012

MySQL Performance: Linux I/O and Fusion-IO, Part #2

This post is part #2, following the previous one. In fact Vadim's comments left me with some doubts about a possible radical difference between the implementation of AIO and normal I/O in Linux and its filesystems. Also, I had never used Sysbench for I/O testing until now, and was curious to see it in action. In the previous tests the main suspect was random write (Wrnd) performance on a single data file, so I'm focusing only on this case in the following tests. On XFS the performance issues started from 16 concurrent I/O write processes, so I'm limiting the test cases to 1, 2, 4, 8 and 16 concurrent write threads (Sysbench is multi-threaded). For AIO writes, 2 or 4 write threads seem to be more than enough, as each thread by default manages 128 AIO write requests..

A few words about the Sysbench "fileio" test options :

- As already mentioned, it's multi-threaded, so all the following tests were executed with 1, 2, 4, 8, 16 threads

- A single 128GB data file is used for all workloads

- Random write is used as the workload option ("rndwr")

- It has "sync" and "async" mode options for file I/O, and an optional "direct" flag to use O_DIRECT

- For "async" there is also a "backlog" parameter to set how many AIO requests should be managed by a single thread (default is 128, which is what InnoDB uses too)
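Put together, the options above map to a command line roughly like the one built below. The option names are the Sysbench 0.4-style fileio options as I recall them, so please check them against `sysbench --test=fileio help` for your version; anything not mentioned in the post (test duration, fsync frequency, etc.) is deliberately left out.

```python
import shlex
from typing import List


def fileio_command(threads: int, block_size: int, io_mode: str,
                   action: str = "run") -> List[str]:
    """Build a Sysbench fileio random-write command (0.4-style options)."""
    cmd = [
        "sysbench",
        "--test=fileio",
        "--file-num=1",               # single data file
        "--file-total-size=128G",
        "--file-test-mode=rndwr",     # random writes
        f"--file-io-mode={io_mode}",  # "sync" or "async"
        "--file-extra-flags=direct",  # open the file with O_DIRECT
        f"--file-block-size={block_size}",
        f"--num-threads={threads}",
    ]
    if io_mode == "async":
        # AIO requests managed per thread (128 is the default)
        cmd.append("--file-async-backlog=128")
    cmd.append(action)                # "prepare", "run" or "cleanup"
    return cmd


for threads in (1, 2, 4, 8, 16):
    print(shlex.join(fileio_command(threads, 4096, "sync")))
```

The same builder covers the later AIO tests by passing "async" as the I/O mode and 16384 as the block size.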

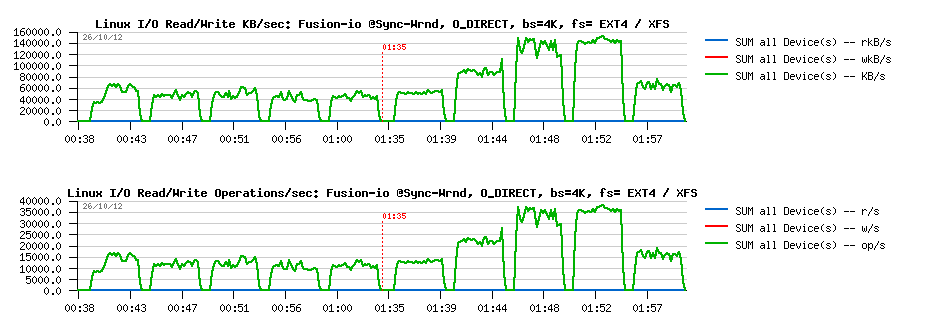

So, let's try "sync" + "direct" random writes first, just to check whether I observe the same things as in my previous tests with IObench:

Sync I/O

Wrnd "sync"+"direct" with 4K block size:

Observations :

- Ok, the result looks very similar to before:

- EXT4 is stuck at the same write level for any number of concurrent threads (due to I/O serialization)

- while XFS performs more than 2x better, but shows a huge drop starting from 16 concurrent threads..

Wrnd "sync"+"direct" with 16K block size :

Observations :

- Same here, except that the difference in performance reaches 4x in favor of XFS

- And a similar drop starting from 16 threads..

However, things are changing radically when AIO is used ("async" instead of "sync").

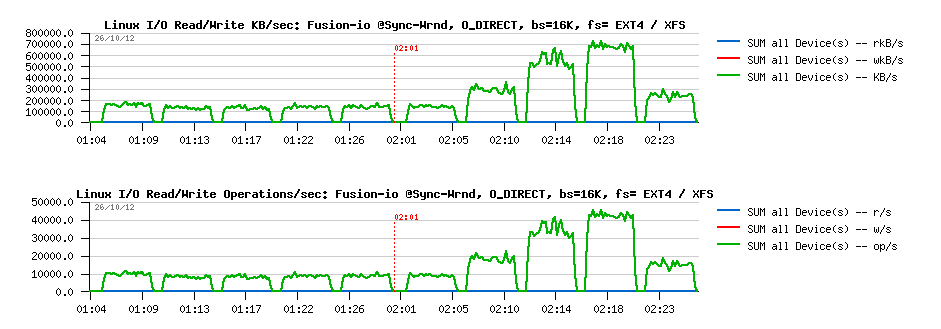

Async I/O

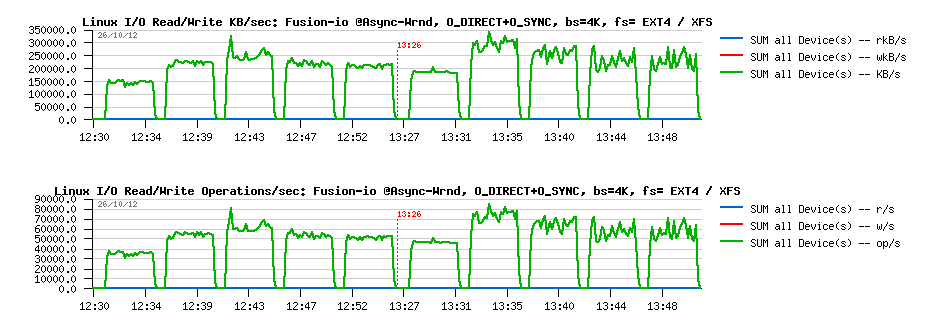

Wrnd "async"+"direct" with 4K block size:

Observations :

- Max write performance is pretty much the same for both file systems

- But EXT4 remains stable at all thread levels, while XFS hits a regression starting from 4 threads..

- Not too far from the RAW device performance observed before..

Wrnd "async"+"direct" with 16K block size:

Observations :

- Pretty similar to the 4K results, except that the regression on XFS now starts from 8 threads..

- Both are now not far from the RAW device performance observed in previous tests

From all points of view, AIO write performance looks way better! Still, I'm surprised by such a spectacular transformation of EXT4.. - I have some doubts here whether something within the I/O processing is not still buffered in EXT4, even when the O_DIRECT flag is used. And if we read the Linux doc about the O_DIRECT implementation, we may see that O_SYNC should be used in addition to O_DIRECT to guarantee a synchronous write:

" O_DIRECT (Since Linux 2.4.10) Try to minimize cache effects of the I/O to and from this file. In general this will degrade performance, but it is useful in special situations, such as when applications do their own caching. File I/O is done directly to/from user space buffers. The O_DIRECT flag on its own makes at an effort to transfer data synchronously, but does not give the guarantees of the O_SYNC that data and necessary metadata are transferred. To guarantee synchronous I/O the O_SYNC must be used in addition to O_DIRECT. See NOTES below for further discussion. "

(ref: http://www.kernel.org/doc/man-pages/online/pages/man2/open.2.html)
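In terms of open(2) flags, the difference discussed here is a single extra bit. A minimal sketch, assuming Linux (O_DIRECT is not available on every platform); the file path is hypothetical, so the open call itself is left commented out.

```python
import os


def direct_write_flags(use_sync: bool) -> int:
    """Open flags for writing an existing file while bypassing the page cache."""
    flags = os.O_WRONLY | os.O_DIRECT  # O_DIRECT is Linux-specific
    if use_sync:
        # Per the man page quoted above: needed to guarantee synchronous I/O
        flags |= os.O_SYNC
    return flags


flags = direct_write_flags(use_sync=True)
print(bool(flags & os.O_DIRECT), bool(flags & os.O_SYNC))  # True True

# fd = os.open("/path/to/datafile", flags)  # hypothetical path
```

Keep in mind that real O_DIRECT writes also need buffers, offsets and sizes aligned to the device block size, which is why the sketch stops at building the flags.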

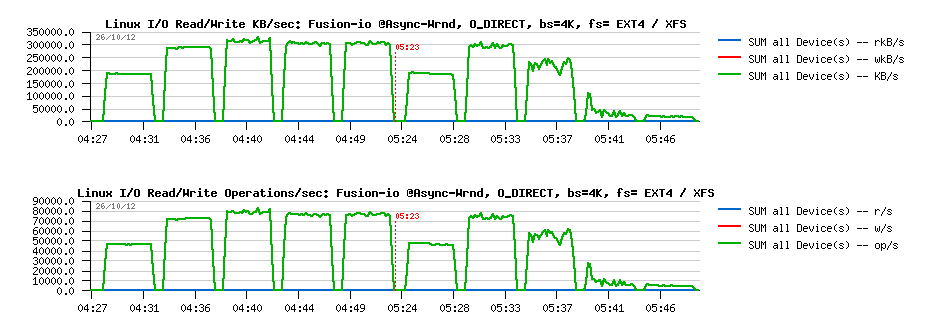

Sysbench does not open the file with O_SYNC when O_DIRECT is used ("direct" flag), so I've modified the Sysbench code to add this, and then obtained the following results:

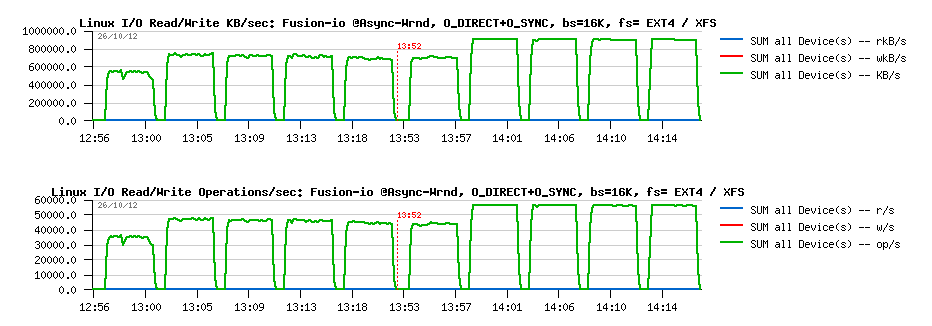

Async I/O : O_DIRECT + O_SYNC

Wrnd "async"+"direct"+O_SYNC with 4K block size:

Observations :

- EXT4 performance becomes lower.. - a 25% cost for O_SYNC, hmm..

- while XFS surprisingly becomes more stable and doesn't have the huge drop observed before..

- also, XFS is outperforming EXT4 here, though we could still hope for more stable results..

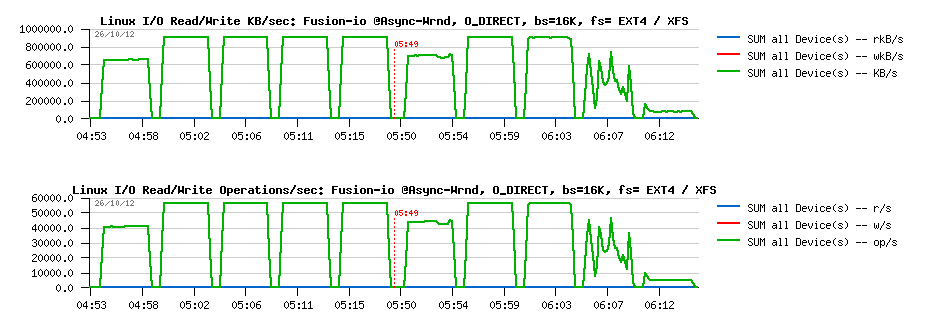

Wrnd "async"+"direct"+O_SYNC with 16K block size:

Observations :

- with 16K block size, both filesystems show rock-stable performance levels

- but XFS is doing better here (over 15% better performance), and reaching the max performance it showed without O_SYNC

I'm pretty curious what kind of changes happen within the XFS code path when O_SYNC is used with AIO, and why it "fixed" the initially observed drops.. But it seems to me that for safety reasons O_DIRECT should be used along with O_SYNC within InnoDB (and looking at the source code, it seems that's not yet the case), or we should add something like O_DIRECT_SYNC for users who want to be safer with Linux writes, similar to O_DIRECT_NO_FSYNC introduced in MySQL 5.6 for users who don't want to enforce writes with additional fsync() calls..

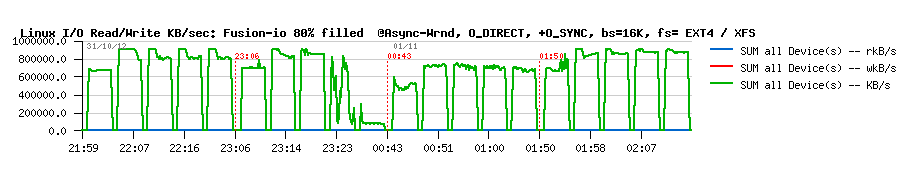

And at the end, especially for Mark Callaghan, a short graph with results of the same tests with 16K block size, but with the filesystem space filled up to 80% (850GB of the whole 1TB space on the Fusion-io flash card):

Wrnd AIO with 16K block size while 80% of space is filled :

So, there is some regression on every test, indeed.. - but maybe not as big as we might have feared. I've also tested the same with the TRIM mount option, but did not get better results. But well, before worrying about this 10% regression, we should first see whether MySQL/InnoDB is able to reach these performance levels at all ;-))

Time for a pure MySQL/InnoDB heavy RW test now..