
Friday, 13 April, 2012

MySQL Performance: 5.5 and 5.6-labs @TPCC-like

It's now over a year since I had a quite interesting exchange with Vadim and other Percona guys about their TPCC-like benchmark results on MySQL 5.5 - at that time I replayed the same tests, but with better tuned configuration parameters, to demonstrate that with a little bit more love MySQL 5.5 may handle a TPCC-like workload very well ;-)) However, it was clear that Adaptive Flushing in 5.5 needed some improvement (which is now implemented in 5.6), and there were also several open questions regarding I/O level and O_DIRECT performance which I was able to clarify for myself only during last year's I/O testing on a decent Linux box + storage..

Considering all this, you may understand my curiosity to see how well (or not) MySQL 5.6-labs is running today on Percona's TPCC-like workload ;-))

But let's start with the system setup first:

- I'm using a 32-core Intel box (two threads per core) with 128GB RAM

- running Oracle Linux 6.2

- storage: 3x SSD in RAID0

- filesystem: XFS mounted with "noatime,nodiratime,nobarrier,logbufs=8" options

Same TPCC-like workload as before:

- 500W data volume

- 32 concurrent users (it was only 16 in the previous tests, but I think it would be a pity to run only 16 users when you have a 32-core Linux box ;-))

Then, my first test will be with MySQL 5.5 - even if it has been GA for more than a year and no major changes are allowed to its code, it was still improved over time and will give us a good idea about its state today.. From the previous testing I've retained that to help Adaptive Flushing in 5.5 the "innodb_io_capacity" value should be set to something huge -- as the algorithm in 5.5 does not follow the REDO activity very well, we should avoid limiting its IO capacity (and when it decides to do a big I/O request, we should let it happen ;-))

MySQL 5.5 configuration:

[mysqld]

max_connections=4000

table_open_cache = 8000

back_log=1500

query_cache_type=0

# files

innodb_file_per_table

innodb_log_file_size=1024M

innodb_log_files_in_group=3

# buffers

innodb_buffer_pool_size=64000M

innodb_buffer_pool_instances=16

innodb_log_buffer_size=64M

# tune

innodb_checksums=0

innodb_doublewrite=0

innodb_support_xa=0

innodb_thread_concurrency=0

innodb_flush_log_at_trx_commit=2

innodb_flush_method= O_DIRECT

innodb_max_dirty_pages_pct=50

# perf special

innodb_adaptive_flushing=1

innodb_read_io_threads = 16

innodb_write_io_threads = 16

innodb_io_capacity = 20000

innodb_purge_threads=1
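Since Checkpoint Age plays a central role in the results below, it may help to see how much REDO space this configuration provides. The following is only a back-of-the-envelope sketch in Python: the function name is hypothetical, and the limit InnoDB really applies to Checkpoint Age is somewhat lower than this raw total.

```python
# Rough REDO log capacity implied by the configuration above.
# Illustrative arithmetic only; this is not how InnoDB computes its
# internal checkpoint limits, which sit below the raw total.
MIB = 1024 * 1024

innodb_log_file_size = 1024 * MIB   # innodb_log_file_size=1024M
innodb_log_files_in_group = 3       # innodb_log_files_in_group=3


def redo_capacity_bytes(file_size, files_in_group):
    """Total REDO log space across all files in the group."""
    return file_size * files_in_group


total = redo_capacity_bytes(innodb_log_file_size, innodb_log_files_in_group)
print(f"Total REDO log space: {total / MIB:.0f} MiB")
```

So Checkpoint Age has roughly 3GB of REDO space to grow into before flushing becomes critical.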

Note that this time I did not reduce the dirty pages percentage to enforce dirty pages flushing - I'm expecting here that my I/O level will be fast enough to keep the flushing activity aligned with the REDO logs.. Let's see what the result is now ;-)

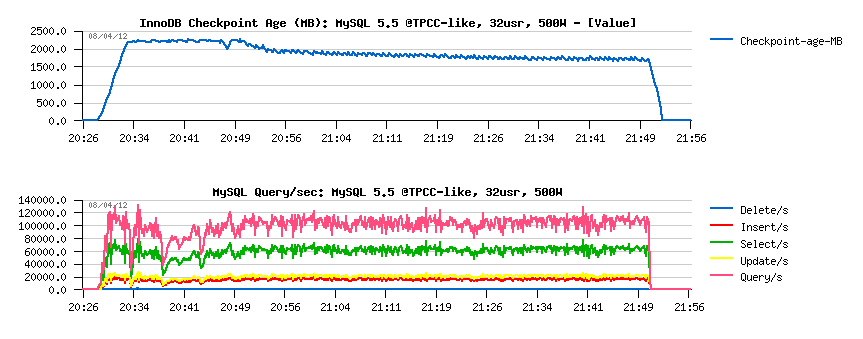

MySQL 5.5 results:

Observations :

- there are some initial QPS drops due to critical levels in Checkpoint Age

- but over time performance becomes more and more stable, reaching something not far from 110K QPS..

Well, let's move to MySQL 5.6.4 now - it was the last 5.6 release just before 5.6-labs, so its Adaptive Flushing is still very similar to what we have in 5.5 (even if some improvements, like the page_cleaner thread, were introduced).. So, nothing is different in the 5.6.4 configuration setup (same 20K IO capacity), except that monitoring via the METRICS table is enabled.

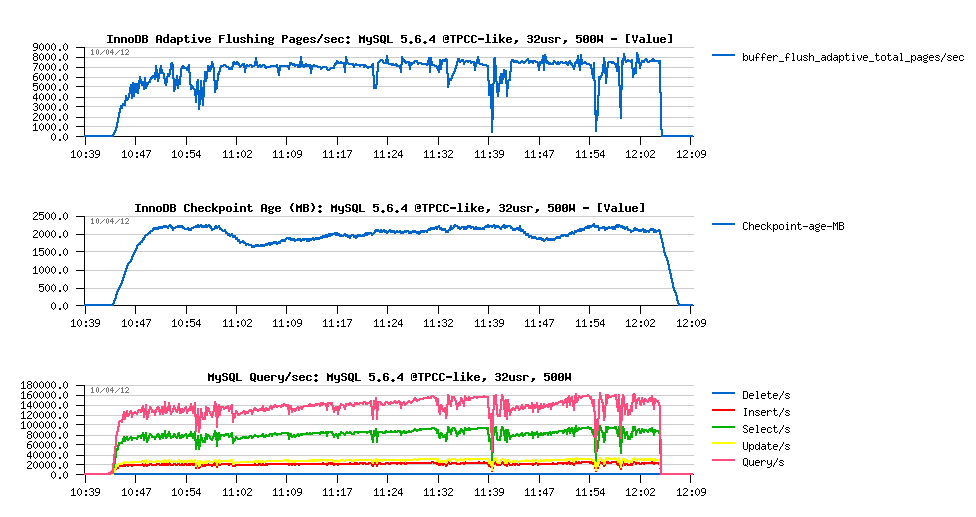

MySQL 5.6.4 results :

Observations :

- performance is reaching 160K QPS over time

- there are more activity drops compared to 5.5..

- all QPS drops are related to reaching critical levels in Checkpoint Age.. - so it was time to improve AF here ;-))

- we're reaching nearly 8000 pages/sec in flushing - good to know for choosing the Max IO capacity in 5.6-labs ;-)

MySQL 5.6-labs configuration:

- same as 5.5, except the following:

- innodb_io_capacity = 4000

- innodb_max_io_capacity = 12000
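To put these page rates into perspective, they can be converted into approximate write bandwidth. This is a small sketch in Python which assumes the default 16KB InnoDB page size (the page size is not stated in this post), and the helper name is hypothetical:

```python
# Convert flushing rates (pages/sec) into approximate write bandwidth.
# ASSUMPTION: default 16 KiB InnoDB page size (not stated in the post).
PAGE_SIZE_BYTES = 16 * 1024
MIB = 1024 * 1024


def pages_to_mib_per_sec(pages_per_sec, page_size=PAGE_SIZE_BYTES):
    """Approximate write bandwidth for a given page flushing rate."""
    return pages_per_sec * page_size / MIB


rates = {
    "innodb_io_capacity": 4000,
    "flushing observed on 5.6.4": 8000,
    "innodb_max_io_capacity": 12000,
}
for label, pages in rates.items():
    print(f"{label}: {pages} pages/sec ~ {pages_to_mib_per_sec(pages):.1f} MiB/sec")
```

Under that assumption, the 8000 pages/sec seen on 5.6.4 is about 125 MiB/sec of page writes, and the 12000 pages/sec limit leaves some headroom above it.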

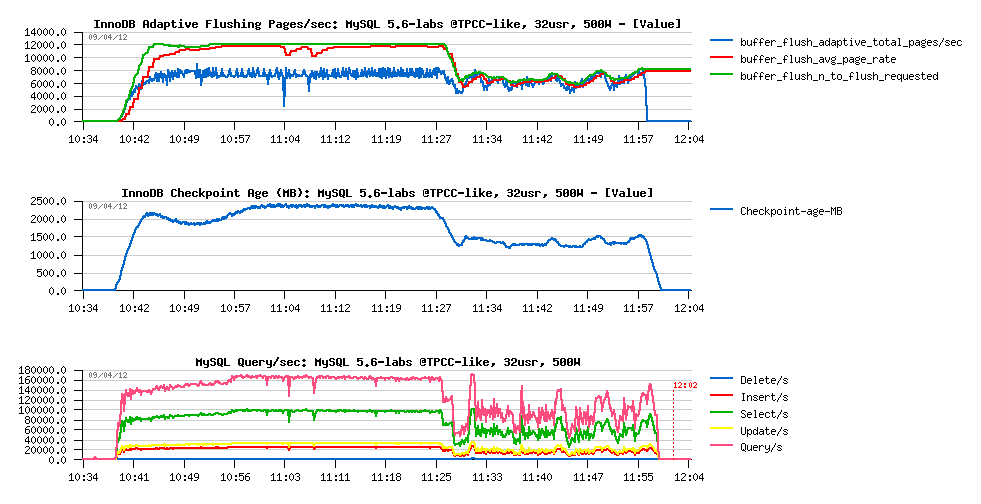

MySQL 5.6-labs results :

Observations :

- it seems we're unable to flush more than 8000 dirty pages/sec here.. (interesting, why?..)

- so, it's not surprising that Checkpoint Age remains at a critical level most of the time..

- however, there are nearly no QPS drops at all on the "good" part of the graph (up to 11:25 ;-))

- we're reaching 170K QPS now !..

- but after 11:25 there is a huge performance drop.. - why?..

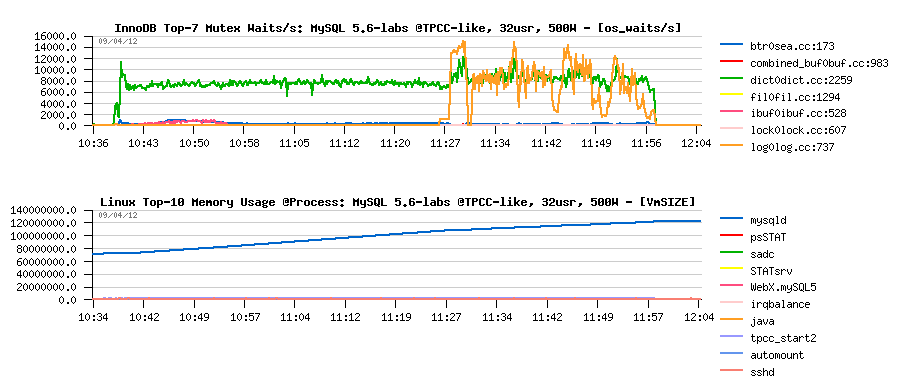

Let's look more in depth:

- the main InnoDB bottleneck on a TPCC-like workload is currently index lock contention..

- but since 11:25 the log_sys mutex contention is jumping up too!..

- the only reason for log_sys contention to show up in such a configuration is that the REDO log files got evicted from the filesystem cache (then most REDO writes involve a read-on-write, because REDO I/O operations are not aligned to the filesystem block size..) - this will be fixed soon in 5.6 too ;-)

- however, since I'm using O_DIRECT, and only MySQL is running on my server, what other I/O activity may remove the REDO log files from the filesystem cache?...

The answer:

- from the graph above you may see that the "mysqld" process is reaching 128GB of memory usage by the end of the test..

- of course, when there is no more free memory on the system, there is no more room for the REDO logs in the filesystem cache either ;-))

- compared with 5.6.4, it seems a memory leak bug was introduced in the 5.6 trunk recently ;-))

- so, I'm happy to have found it, but keep it in mind for the moment when trying 5.6-labs on Read+Write workloads - I think it'll be fixed soon..
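The read-on-write effect behind the log_sys contention can be illustrated with a small model. This is only a sketch in Python under stated assumptions: a 4096-byte filesystem block, REDO writes issued in multiples of 512 bytes, and hypothetical helper names. It does not reproduce what the kernel or InnoDB actually do; it only shows why an unaligned write to a block which is no longer cached costs an extra read.

```python
# Sketch: which buffered writes force a block to be read before it is written?
# ASSUMPTIONS: 4096-byte filesystem block, REDO writes in 512-byte multiples.
FS_BLOCK = 4096
LOG_BLOCK = 512


def partial_blocks(offset, length, block=FS_BLOCK):
    """Return indexes of filesystem blocks only partially covered by a write."""
    if length <= 0:
        return []
    end = offset + length
    first, last = offset // block, (end - 1) // block
    partial = set()
    if offset % block != 0:   # write starts in the middle of a block
        partial.add(first)
    if end % block != 0:      # write ends in the middle of a block
        partial.add(last)
    return sorted(partial)


def needs_read_on_write(offset, length, cached_blocks, block=FS_BLOCK):
    """True if any partially written block is missing from the cache."""
    return any(b not in cached_blocks
               for b in partial_blocks(offset, length, block))


# One 512-byte log write landing in filesystem block 1:
print(needs_read_on_write(4096, LOG_BLOCK, cached_blocks={1}))    # block cached
print(needs_read_on_write(4096, LOG_BLOCK, cached_blocks=set()))  # block evicted
```

While the REDO log files stay in the filesystem cache, the partially written block is already in memory and no read is needed. Once memory pressure evicts them, the same small write first has to fetch the block from storage.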

Well, I'm pretty happy with what I saw.. (except the memory leak bug of course ;-)) -- and I now see the potential we have in 5.6-labs.. And with all the other improvements which are currently in the pipe, MySQL 5.6 should once again be the best MySQL release ever, after 5.5 !..

Stay tuned.. ;-)

Rgds,

-Dimitri
